The Frontier audio team joined forces to give you the impressively detailed behind-the-scenes story on how they made the dinosaur sounds, interface sounds, interactive music system, and so much more for the critically-acclaimed Jurassic World Evolution game.

In Frontier Developments’ Jurassic World Evolution game (available on PS4, Xbox One, and Steam), you get to try your hand at bringing John Hammond’s dream to life: creating a park filled with dinosaurs and tourists. I mean, what could go wrong in bringing those two together, right? The game is part mad science (you get to bioengineer dinos and alter their DNA), part resource management, part world-building, and part sinister fun, as there are no rules against letting your dinos loose on unsuspecting park visitors.

The dinos look and sound amazing, everything you’d expect from the Jurassic Park/World franchise. The game sound ties into the films’ sound, so the beloved roars of the T. rex, Indominus Rex, and the newly designed Indoraptor are all part of Jurassic World Evolution. Since the game’s release actually preceded the release of Jurassic World: Fallen Kingdom, it was essential for Frontier’s game audio team to be in constant communication with Universal Studios regarding the Indoraptor’s sound.

But the Indoraptor is just a small piece of a very large sound puzzle. It truly took a village to create the sound of this dino-inhabited microcosm. Members of the Frontier Jurassic World Evolution audio team include: Head of Audio Jim Croft, Lead Audio Designer Matthew Florianz, Senior Audio Designers Duncan Mackinnon, James Stant, and Dylan Vadamootoo, Technical Audio Designer Stephen Hollis, Lead Audio Programmer Will Augar, Senior Audio Programmer Ian Hawkins, Audio Programmer Jon Ashby, Audio Test Engineer Sam Doyle, Audio QAs Robin McGovern and Christopher Jackson, Additional Audio Designer Pablo Cañas Llorente, Music & VO Supervisor Janesta Boudreau, and Composer Jeremiah Pena.

Here, members of the team share all their secrets about what went into creating the game’s sound.

• Aesthetic approach, matching the film franchise

• Creating the dinosaur sounds, recording and manipulating sounds

• UI sound design

• Challenges and changes

• Final thoughts on the process

Aesthetic Approach:

Overall, what was your direction for sound on Jurassic World Evolution? How did you want sound to contribute to the game experience?

Matthew Florianz (MF, Audio Lead): In all our games (Elite Dangerous, Planet Coaster, and Jurassic World Evolution), we treat sound direction as part of our creative, technical, and project-planning considerations. It’s important to map out the creative boundaries of a project in terms of technical and planning feasibility. This is certainly something that’s a little different now from fifteen years ago, when sound design was mostly only about making sounds.

That might not be an obvious focus at the start of a project but it helped us identify bottlenecks. We don’t want to get bogged down by technicalities when other departments have perfected their pipelines and are producing their assets at an accelerated rate.

Jim Croft (JC, Head of Audio): Equally, we don’t want to get blocked by another department in the pipeline and so the start of a project is a great time to look at our own pipeline improvements. Audio design is traditionally always the last development stage before going to QA (Quality Assurance). With systems and assets from other disciplines invariably coming in hot at the end of the development cycle, we are always on the lookout for ways we can frontload our work and have our team contributing from as early as possible in the process.

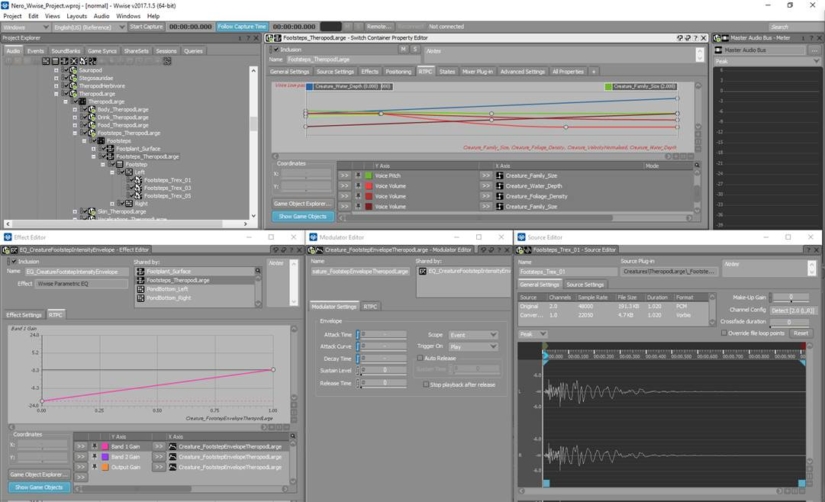

Frontier games are mostly set in open worlds, allowing player control over the camera and often allowing players control over the world’s content and layout. There is little time for predicting things for the sake of audio and worlds can scale drastically. Wwise (the sound-engine middleware we use for implementation) tends to favour the standard model of distance-based attenuation as a means of assigning priority. That’s not always the best way to figure out what should and shouldn’t contribute to the mix. Will Augar (Lead Audio Tech) can talk about our solutions with regards to our games in more detail later.

The point is our tech and the resulting implementation influences how the game sounds. Of course, our technical direction is still derived from creative questions, such as, "What happens if we try this?" But for our games, creative possibilities tend to be framed in a technical context, rather than by a purely creative imagination (as wonderful as that can be). We have learned over the years that if you create the right systemic environment, you can push the boundaries as much as you like creatively and not have to worry so much about blowing voice count budgets or CPU cycles, because everything is ring-fenced and managed by context.

MF: It’s a long introduction to a question about sound direction but it’s so important to phrase our answer in this context. It’s a big part of being a sound designer (particularly at Frontier) that’s not talked about often.

Regarding creative direction and the choices we made, in a simulation and management game there’s always the question of what the player is. Are they a godlike figure? Are they represented in the park that’s created? Is it all happening in the imagination? There are various ways of framing the player’s actions that place them inside or outside of the created world, and deciding on a consistent approach is beneficial to audio direction.

In Jurassic World Evolution, those discussions kept coming back to one thing: location. It’s very important to present and experience audio in a visual context — ‘in world,’ as it were. Not only is that the environment that players interact with, it’s also what they create. We identified several distinct locations/viewpoints:

- Observing (vehicles, dino cam) > cinematic / third-person close-up.

- Creation (paths, terrain, building) > isometric with a free camera.

- Planning (navigation) > ‘eye in the sky’ top down.

- Map mode > a schematic overlay.

- Management > a ‘command centre’ UI-driven interface to manage dig sites, dinosaur DNA, etc.

Furthermore, characters like Ian Malcolm (Jeff Goldblum) and Cabot Finch (Graham Vick) will communicate with the player from ‘somewhere off-island.’

Taking all of that together, we decided that the player is in an unseen location which resembles the command centre from the Jurassic World movie. So even though the earlier mentioned viewpoints are different in terms of experience (first person, overview, 2D screens, etc.), we have a direction for audio to tie all of it together. Throughout the interview, we’ll reference how this affected audio creation.

JC: A primer to our approach is ‘emphasise change.’ In other words, as much as possible, we use game data to drive audio, which is what we learned from working with Lead Audio Designer Joe Hogan on spaceship design in Elite Dangerous. Everything gets parameterized. This approach puts the player first and allows them to feel that their input has an immediate effect on the audio in the world. Anything that doesn’t change over time or doesn’t represent the changes a player makes is considered relatively unimportant in our mix. That’s one of the reasons we don’t do looping background music and why all our ambiences are created with lots of panning, distance, and movement in them.

MF: Finally, let’s talk about the star of the show: the dinosaurs. Right from the start we agreed we’d mix the game around their presence. A close-up roar is the loudest sound in the game, and within the dinosaur hierarchy all sounds are referenced to that roar in terms of LUFS-based loudness targets: -23 LUFS for a snort, for example, and all the way up to -12 LUFS for the roars themselves. Dinosaurs also contain most of the low-end content.
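
As a rough illustration of referencing sounds to loudness targets, the sketch below computes the gain needed to move an asset from a measured loudness onto its target. Only the -23 LUFS snort and -12 LUFS roar figures come from the interview; the function names and the measured values are hypothetical, and the loudness measurement itself is assumed to happen elsewhere.

```python
# Loudness targets quoted in the interview (LUFS); other categories would sit in between.
TARGETS_LUFS = {"snort": -23.0, "roar": -12.0}


def gain_to_target_db(measured_lufs: float, category: str) -> float:
    """Return the gain in dB that moves a measured loudness onto its target."""
    return TARGETS_LUFS[category] - measured_lufs


def db_to_linear(gain_db: float) -> float:
    """Convert a dB gain into a linear amplitude multiplier."""
    return 10.0 ** (gain_db / 20.0)


# Hypothetical measurements for two rendered assets.
roar_gain = gain_to_target_db(-15.5, "roar")    # too quiet, needs a boost
snort_gain = gain_to_target_db(-20.0, "snort")  # too loud, needs a cut
print(roar_gain, snort_gain, round(db_to_linear(snort_gain), 3))
```

Keeping the targets in one table means a change to the roar reference can be propagated to every other category without touching individual assets.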

The game release preceded the film’s release, so when it came to designing sound for the new Indoraptor, how did you know you were on the right track?

MF: We’ve had an excellent dialogue with Universal. They have been with this franchise for over 25 years and know who the fanbase and audience are. They’ve kept us up to date with in-progress scripts, trailers and artwork and sent us down-mixes of Jurassic World: Fallen Kingdom audio as it was being created by the team at Skywalker Sound (Gwendolyn Yates Whittle, Al Nelson, Pete Horner and Christopher Boyes). This was great for understanding the sound direction of the Indoraptor but we still needed to understand a little more about how it used those sounds in its behaviours.

Duncan MacKinnon (DM, Senior Audio Designer): A representative from Universal brought along some early Indoraptor footage. I’d imagined the footage might be on an encrypted flash drive in a locked briefcase handcuffed to his arm, but of course it was streamed from their servers. The animators had previously been sent a walk cycle and high-res models, so we had a vague idea of how the Indoraptor moved.

To prepare, I made a list of all the sounds Universal had sent us, with detailed descriptions of each one and how and where I thought they’d be used. I tried to link the sounds with the behaviours we would be having in game — hunting, fighting, eating, etc. I made sure all the stems were edited and named properly, so that I could reference them in my notes and be sure I was linking the sounds with the right behaviours.

We saw the footage three times, and I made frantic notes, and drew what the waveforms of some sounds would look like. This was actually quite useful in conveying a sound’s energy. I took note of one sound in particular — a creepy guttural hiss sound the dinosaur made when creeping about. It is a very recognisable and unique sound that is a reference to the genetic makeup shared with Indominus Rex. It’s much easier to make the dinosaurs sound exciting when they are doing exciting things. I knew this would be a very useful sound to use when the dinosaur was less active.

The animation team did an amazing job in making the in-game Indoraptor look and feel right. There were so many movements from the test footage that showed up in the in-game animations. In particular, there is this creepy muscle-spasm/shake movement that adds to the evil craziness of the Indoraptor. It’s not an instantly noticeable characteristic, but picking up on details like these really sells the dinosaur.

MF: Even before we were shown the test footage from Universal, the mock-ups that Duncan had created from the Indoraptor down-mixes we had received earlier were quite accurate. He had created so many dinosaurs before (being the Creature Lead on this project) that he had instinctively used the down-mixes in the correct way.

DM: It’s also a testament to the quality of the sounds created by Universal, the amazing animators we have at Frontier, and the detailed, thoughtful way we work in audio that made it sound so good.

https://www.youtube.com/watch?v=Itb3bwH2o5s

Creating the Dinosaur Sounds:

What about the other dinosaur sounds? How did you create those to be in line with the existing Jurassic Park/World dino sounds? (The T. rex roar is awesome by the way! Nailed it!)

MF: [laughing] On behalf of Gary Rydstrom, thank you! Skywalker Sound created that recognisable creature language. It’s a legacy we have been given the opportunity to expand upon, so it’s really nice to hear that you like what we did.

We greatly respect the franchise and our community of Jurassic Park and Jurassic World fans. We all grew up with these films, so we didn’t plan to mess with the memory of watching these as our younger selves. I think that was obvious to the people we spoke with at Universal and this bought the entire team on the project a lot of trust.

Were you able to take creative license on the dino sounds? I mean, since you can mess with the dinos’ DNA that could actually affect their vocals, in theory…

MF: That’s a fun question and admittedly it terrified us for a little while. Post-processing on organic sounds is limited, at least with the tools available to us in the real-time domain. There’s some excellent development from companies like Krotos, Ltd. but for us that wasn’t a direction we could follow. Supporting over 40 dinosaurs (and the entire rest of the game) would be a fun challenge in itself.

The team was two sound designers, Jim and myself, three coders, a technical sound designer, and a test engineer.

JC: That’s a compact team for a project of this size, so we approached dino sounds pragmatically: one unique set per dinosaur family (such as breathing, footsteps, and some Foley and growls/groans) and once we had those we went more granular, focusing on unique calls for each individual dino genus. Furthermore, wherever possible, the dinosaurs that appear in the Jurassic World and Jurassic Park films had to sound like they do in those films. And so we endeavoured to use as much source from Universal and Skywalker Sound as possible.

Universal had provided us with extensive source for a number of film dinosaurs, such as T. rex and Velociraptor, but of course there were many gaps and some creatures are on screen in the films only briefly. Even the ‘stars’ wouldn’t have all the ‘behaviours’ we’d need to support. We had to figure out our own ingredients (new animal source sounds) and ‘recipe’ (how they are combined) with which to complement existing creatures or even create entirely new dinosaurs.

In terms of creative freedom, Michael Brookes (Game Director), Matt, and I had some initial conversations with David Price, Universal Games & Digital Platforms’ Senior Games Producer. He had worked on games before, so he understood our concerns in interactive media with regards to repetition and frequency. He saw our need to create ‘additional’ sound effects and to expand upon the Skywalker Sound libraries.

MF: So that’s the approach that we came up with. We had to prove it out. While Duncan worked on enriching the Raptor sound set, I worked on creating one for the T. rex. We experimented and iterated through various approaches: track laying, reverse engineering, and analysing. It was very inspiring to listen and react to each other’s solutions and to unlock new approaches together. Coming full circle, we both found that the more we added to Skywalker’s sounds, the more we felt it was wrong to mess with expectations and the language established.

Analysing the T. rex’s appearances in the films, we discovered that her roar has evolved over the 25 years she’s been on-screen. In the first film the roar has a musical quality that can be tuned to the third note of composer John Williams’ theme, while four films later the roars have taken on a more animalistic character.

Because we had audio down-mixes from all the films, this evolution came in handy for our implementations. We ended up using the big ‘hero’ roar (Jurassic Park) when our T. rex did something she does in that film. We used the animalistic roars (Jurassic World) more often, as they lent themselves better to frequent use.

From analysing the T. rex roar in Jurassic Park, I’ve learned that the film probably uses very little variation in source for the tonal component of the main roar, but does vary the transient and tails. Using the down-mixes as a baseline, similar-sounding animal samples were sourced to recreate this approach. As the down-mixes had a baked transient and tail, I figured this was something that could be replaced with more variations, as the roar itself is entirely unique. I also added an additional (quiet) growl and rumble layer. All those additional sonic textures and creature-source material are the basis for the Large Theropod family of dinosaurs, the ones we had to make up ourselves.

In summary, my workflow for dinosaur sound creation started with Universal-supplied source for the T. rex and I figured out the holes. Next, I derived a set of ‘sympathetic’ animal sources to fill in holes or add variety. Then, I used our sourced animal effects to create a generic ‘family’-set of sounds (shared) and bridged the film dinosaur audio to our creations. This approach keeps the T. rex unique (and familiar) and makes sure that our new creations sound as though they’re coming from the same sonic universe.

JC: That’s a little different to how I ended up working. With my Hadrosaurs, I didn’t have much down-mix material to work from, so I found myself bringing a plastic trombone into work! There has been some scientific research into why these species are equipped with their slightly bizarre-looking head adornments, and one school of thought is that they acted like a resonant chamber, amplifying their calls to each other. I love this kind of informed storytelling. I wanted to try to reflect this across the whole Hadrosaur family. After much experimentation, I used the trombone without the slider and ‘played’ it through some air-conditioning ducting. I recorded more than 150 ‘takes’. 20 or so of these were used as source for my first dino, the Parasaurolophus. I manipulated it with pitch and layering and dynamically mixed it in various ways with sheep, goose, horse and sea lion sounds using a process developed by Duncan Mackinnon, which he will describe in more detail later. It became the ‘signature’ sound for this family of dinos. For the rest of the Hadrosaur family, I kept the processed trombone and sheep as the modulator but swapped out the goose texture in the carrier, using animals like moose and camel, to represent the different genera within the family.

Tsintaosaurus is a genus of the Hadrosaurid family. This clip features Jim’s trombone/animal call.

You spoke about analysing the T. rex; can you talk about the research?

MF: The whole team worked on dinosaurs and inevitably we all developed varying strategies. The most important rule for us was to use supplied Skywalker down-mixes first and only sparingly expand on or reverse-engineer extra elements. So indeed, before we did any creative work, we tried to understand the language that Skywalker Sound had created for the films. Duncan MacKinnon researched Small Theropods (Velociraptors, Dilophosaurus) and he can share his findings and what went into the creation of those. With regards to the T. rex, here are some of the notes we took, specifically with regards to the roar heard in Jurassic Park:

"The Tyrannosaurus Rex" – scene from Jurassic Park (T. rex breaks through the fence).

- T. rex audio is present throughout the scenes (footsteps, rumbles, growls) even when not in focus (presence).

- Humans are always clearly audible but quieter than the T. rex.

- Animal footsteps are heavy, but don’t dominate in the mix (some of the lowest frequency noise).

- Sparingly used sub/low bass > bass and sub bass is gated or modulated.

- Transparent compression, short reverb/early reflection.

- No music, effects driven > careful spotting of music; comes in when remaining humans are in peril of being pushed over an edge, not from being stalked initially.

- Rain provides a low level noise floor > ambiences are muted.

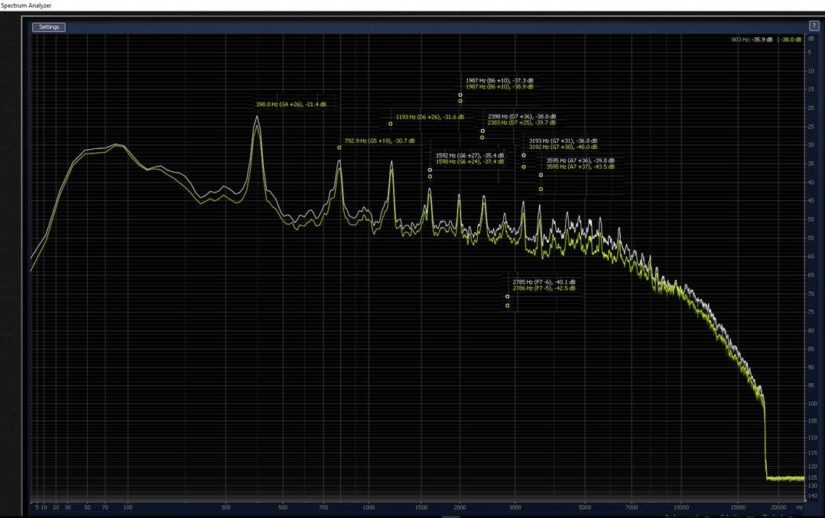

The roar is the most defining part of that scene and listening to it over and over again, two things stand out — a tonal quality and something else. In trying to understand it, I analysed the scene forensically in iZotope RX:

RX reveals:

- How dominant that T. rex roar is.

- The low-end impact density of the footsteps.

- There are Rorschach-like patterns which are indicative of reversing/copy/pasting to extend source sounds.

- There’s some pitch shifting (very mild) going on and possibly some time-stretching.

- Calls use different attacks and tails but the same core "tonal roar" is used throughout.

- That seems to be in relationship to how near the creature is to the camera and how quickly a roar attacks/decays. A great approach with which to convey size and relative position to the camera, even if that perhaps wasn’t intentional, we could use it for our game!

Perhaps the manipulation indicates that even though they had only one good source roar, they could use it multiple times by adding attacks/decays and manipulating it. There were, in short, a lot of interesting approaches and ideas in there and they told a story, which is now part of our in-game implementation. A little bit of reverse engineering.

The other obvious visual pattern is the set of horizontal lines, which indicate a tonal (musical) quality. A frequency analysis confirms that it is musical; see below:

The sound design of Jurassic Park reveals a similar ‘musicality’ throughout all the creature design.
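
The idea that steady horizontal lines in a spectrogram point to a tonal sound can be sketched with a small frequency analysis. The example below is not the RX workflow described above; it is a minimal, pure-Python illustration that uses a synthetic sine wave as a stand-in for the tonal core of a roar. The function names, the 250 Hz tone, and the 8 kHz sample rate are all assumptions made for the example.

```python
import cmath
import math


def dft_magnitudes(samples):
    """Naive DFT magnitudes for bins 0..N/2 (fine for short illustrative clips)."""
    n = len(samples)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(s * cmath.exp(-2j * math.pi * k * i / n) for i, s in enumerate(samples))
        mags.append(abs(acc))
    return mags


def dominant_frequency(samples, sample_rate):
    """Return the frequency (Hz) of the strongest non-DC bin."""
    mags = dft_magnitudes(samples)
    peak_bin = max(range(1, len(mags)), key=mags.__getitem__)
    return peak_bin * sample_rate / len(samples)


def tonality(samples):
    """Peak-to-mean magnitude ratio: high for tonal sounds, low for noise-like ones."""
    mags = dft_magnitudes(samples)[1:]
    return max(mags) / (sum(mags) / len(mags))


# Synthetic stand-in for a roar's tonal core: a 250 Hz sine at an 8 kHz sample rate.
SAMPLE_RATE = 8000
tone = [math.sin(2 * math.pi * 250 * i / SAMPLE_RATE) for i in range(512)]
print(dominant_frequency(tone, SAMPLE_RATE))
```

A real roar would be analysed in short overlapping windows; a dominant frequency that stays put from window to window is what shows up as a horizontal line.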

Were there any audio tools that helped you manipulate your recorded sounds?

MF: When Duncan MacKinnon researched Raptor audio, he found that it was possible to mix and match the down-mixes we had received. We realised that the Skywalker assets had been EQ matched. They seemed to be deliberately geared to work together and that’s something we wanted to emulate.

DM: We had a lot of different sounds for the Raptors, which made them a great model for how the other sound sets could work. As a small theropod, and a famous film dinosaur, but not one that we had a lot of source for, the Dilophosaurus was a great place to start.

Going into this project I’d had a bit of experience working with animals on our previous Zoo Tycoon title, but I’d never created ‘imagined’ sounds for a creature. I went through a few different processes, using a very messy Cubase session as a sketchpad. My instinct was to start off pitching down and time-stretching various animals, mixing different creatures together and using lots of effects.

As I continued further down this route, at each step more of the source character disappeared. It ended up a frustrating mess of noise. I was banging my head on a brick wall. There’s a great GDC talk by Mick Gordon in which he says, "Change the process, change the outcome." It was time for some new ideas. I often find myself falling back to a favorite (slightly archaic) tool for inspiration: Camel Audio’s Alchemy.

I’d used its Morph feature a bit on Elite Dangerous for making fire sound like water. When morphing you take sound A and apply its characteristics (frequency content, volume envelope, etc.) to sound B. You end up with it sounding somewhere in-between the two. I chucked in some animal recordings, and got some interesting results with Alchemy, but as it’s now Mac only, and the morphing in it is (in all honesty) not a user-friendly tool, I needed something else.

Zynaptiq’s Morph 2 was the answer.

I was investigating making new sounds for the Dilophosaurus (the spitting dinosaur from Jurassic Park). I wanted something angry and aggressive. We had some cute bird-like whoops for her, and some harsh hissing growls and roars. I also had a chittery, nasty monkey sound that I liked so I chose that for the A signal. I took the bird-like whoop and used that for the B signal.

The way the sounds morph when moving the middle control around is incredibly intuitive; you get a dry sound at each of the corners, and a mix of Morph and X-Fade as you move the control around. It sounded like a Dilophosaurus whooping call but with an angry, aggressive character from the monkey. It was pretty clear that this was the tool I was looking for.

I made a few variations quickly, and played them back-to-back with the Skywalker sounds. They had a similar expressive quality, but they didn’t sound quite right; there was an EQ mismatch between sounds made in the early 90s and my sound. My instinct was to reach for Q10 and by comparing waveforms and using my ears, try and match the EQ profiles. On a project this size, you always need an eye on how your pipelines scale. It was pretty obvious this approach would be a massive time-sink. I’d done some EQ matching as a mastering process on a mix years ago when I was trying to copy the sound of Jeff Buckley’s Last Goodbye, and I thought the technique could work well here.

I took my new Dilophosaurus sound and used one of the hissy growls from Skywalker as a reference. FabFilter instantly created the right frequency bands and filter types, and in about 1 second I had a very complicated 10-band EQ profile that I could not have set better myself! The sounds suddenly fitted perfectly with the Skywalker source, almost as if they were from the same sessions. Morph, then FabFilter, that was our dinosaur sound design process.
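
The principle behind EQ matching can be sketched as a per-band comparison between a reference and a new sound. This is not how FabFilter computes its match, which the interview does not describe; it is a simplified illustration in which the band levels, the five-band layout, the function name, and the 12 dB clamp are all hypothetical.

```python
def match_eq_gains(reference_db, source_db, max_change_db=12.0):
    """Per-band gain (dB) that nudges the source spectrum toward the reference."""
    if len(reference_db) != len(source_db):
        raise ValueError("both spectra need the same number of bands")
    gains = []
    for ref, src in zip(reference_db, source_db):
        diff = ref - src
        # Clamp so a near-silent band does not cause an extreme boost or cut.
        gains.append(max(-max_change_db, min(max_change_db, diff)))
    return gains


# Hypothetical average band levels in dB for five bands, low to high.
skywalker_reference = [-18.0, -12.0, -10.0, -16.0, -30.0]
new_dilophosaurus = [-20.0, -12.0, -6.0, -10.0, -14.0]
print(match_eq_gains(skywalker_reference, new_dilophosaurus))
```

In this made-up case the new sound is much brighter than the reference, so the top bands are cut the most, which is the kind of mismatch you might expect between modern source and early-90s recordings.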

It was important to create a process that could be used by the other sound designers. I made a Cubase template file with multiple Morphs and all the right A and B tracks so designers could drop in their source, morph it, and then have the tracks ready to EQ match.

MF: On the subject of animal source "recorded sounds," we read every interview and watched ‘behind the scenes’ documentaries to source appropriate assets from our own sound libraries. Each one of us took on a dino-family and we tried to keep the source unique within each family. Whenever one of us found a sample that sounded like something that might have been used in the films, we’d inform each other. Throughout production, our understanding of what the different dinosaurs "should" sound like grew, until we developed our own sensibility and became fluent in augmenting Skywalker Sound’s established language.

Duncan, Jim, Dylan, and James took the bulk of work on them with Duncan fitting the role of ‘Creature Lead’ quickly and naturally. He’s really done a fantastic job on his creatures; the whole Jurassic World Evolution development team has! It’s been such a pleasure working closely with production and the wider dinosaur team; we got great support from those guys. Audio is a downstream department obviously, but the way we’ve been supported and included early on by those guys has been fantastic. I’m very proud of how our dinos look, move, and sound!

For those curious, here are some of the animals we used as source in our dinosaur audio (the "ingredients" as Jim called them):

Large Theropods: Horse, Pig, Elephant Seal, Lion, Elephant, Otter, Orangutan and Ocelot.

Small Theropods: Vulture, Small Monkeys, Human Voice.

Ankylosauridae: Seals, Walrus, Wild Boar.

Stegosauridae: Deer, Hippo, Mule, Panda, White Rhino.

Ceratopsidae: Alligator, Camel, Human, Cow, Bear, Wolf, Walrus and Moose.

Sauropods: Humpback and Toothed Whales, Donkey.

Hadrosaurs: Trombone (Human), Goose, Camel, Sheep, Horse, Moose, Walrus and Sea Lion.

Theropod Herbivore: Small Monkeys, Humboldt Penguins, British Birds, Crane, Horse.

In addition to the vocalizations, you also have footsteps, breathing, and other dino-related sounds. Can you talk about the variety of sounds you needed to create for each dinosaur?

MF: Oh, there’s lots! The camera can frame things really close, so it is important to go into a high level of close detail. We initially designed everything for close-up and added as much detail as necessary (and that was reasonable). Very large dinos have a skin layer, but on the medium to small ones that sometimes got in the way of footsteps. We share footstep sweeteners and water sounds between all of them (small, medium, and large). Fine detail sounds like lip smacks are shared across a family and distinct roars are unique per dinosaur.

JC: So we have all this lovely detail on a single dinosaur when zoomed in, but when we are zoomed out, we need to have less detail on each creature, so that there is enough room in the mix to hear signature calls for all dinosaurs in the park and the system isn’t swamped with too many now unimportant high-detail dino events. This leaves space to also describe other more ‘impressionistic’ elements that make up a park soundscape. Our code-based systems (and I’m super-proud of the work that Will and Jon have done on these) look at a data-derived ‘context’ to decide what is important to hear from any given viewpoint, instead of simply using good old distance attenuation. The number of sounds being played remains essentially the same. It’s scalable, keeps the mix clean, and it also helps us keep performance in check. This is a huge step forward for us.
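
The difference between context-driven and distance-driven prioritisation can be sketched in a few lines. Frontier’s actual system is not described in the interview, so everything here is hypothetical: the `DinoEvent` fields, the scoring rule, and the event names are invented purely to show how the same voice budget can be filled differently depending on camera context.

```python
from dataclasses import dataclass


@dataclass
class DinoEvent:
    name: str
    distance: float      # metres from the camera
    detail_level: str    # "fine" (lip smacks, skin) or "signature" (calls, roars)


def audible_events(events, zoomed_in, max_voices):
    """Pick which events play, using camera context rather than distance alone."""
    def score(event):
        if zoomed_in:
            # Close camera: nearby fine detail matters most.
            base = 2.0 if event.detail_level == "fine" else 1.0
            return base / (1.0 + event.distance)
        # Wide camera: fine detail is dropped, signature calls win regardless of distance.
        return 1.0 if event.detail_level == "signature" else 0.0

    ranked = sorted(events, key=score, reverse=True)
    return [e.name for e in ranked if score(e) > 0.0][:max_voices]


events = [
    DinoEvent("rex_lip_smack", 5.0, "fine"),
    DinoEvent("rex_roar", 5.0, "signature"),
    DinoEvent("para_call", 300.0, "signature"),
    DinoEvent("raptor_skin", 40.0, "fine"),
]
print(audible_events(events, zoomed_in=True, max_voices=2))
print(audible_events(events, zoomed_in=False, max_voices=2))
```

With the same two-voice budget, the close camera keeps the nearby T. rex detail, while the wide camera trades it for a distant signature call that pure distance attenuation would have discarded.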

MF: Another way to keep our audio performant is that we decided to have a limit on memory to avoid having to stream sound banks. The budget (212MB/compressed) excludes any streaming assets like music, ambiences, or VO. We currently use less than 100MB for dinosaurs, which is quite small considering the amount of dinosaur assets (7,309 originals / 2.1GB uncompressed). That is, in part, achieved by using a default low compression setting as it’s better to encourage designers to increase compression quality when results sound bad than to scale back. Careful use of sample rate and stereo or mono assets further reduced memory usage.

We further kept our memory footprint lean by relying on Wwise implementation rather than increasing asset count. To give you an example: on footsteps we applied dynamic envelopes. The T. rex footstep audio is a transient impact, a ‘claw spreading’ middle (foot resting) and a long tail. When the T. rex moves around, a foot impact speed RTPC modulates an envelope (ADSR) that shapes an EQ. When she walks, the transient bit is faded and when she runs we fade away the middle and tail but keep the transient part. One sample, multiple uses. Similarly, our water, ground-surface, and forest sweeteners use the same method, which saved memory and the work of creating additional assets.
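
The ‘one sample, multiple uses’ idea can be sketched as a mapping from a speed parameter to per-segment levels. This is a simplification under stated assumptions: it uses plain gain per segment where the interview describes an envelope shaping an EQ inside Wwise, and the normalised 0-to-1 speed range, the 0.25 walking floor for the transient, and the function names are all hypothetical.

```python
def footstep_segment_gains(impact_speed):
    """Map a normalised foot-impact speed (0 = slow walk, 1 = full run) to segment gains."""
    speed = max(0.0, min(1.0, impact_speed))
    return {
        "transient": 0.25 + 0.75 * speed,  # faded when walking, full when running
        "middle": 1.0 - speed,             # 'claw spreading' rest, fades out when running
        "tail": 1.0 - speed,               # long tail, fades out when running
    }


def shape_footstep(samples, transient_end, middle_end, impact_speed):
    """Apply per-segment gains to one footstep sample (a list of floats)."""
    gains = footstep_segment_gains(impact_speed)
    shaped = []
    for i, s in enumerate(samples):
        if i < transient_end:
            shaped.append(s * gains["transient"])
        elif i < middle_end:
            shaped.append(s * gains["middle"])
        else:
            shaped.append(s * gains["tail"])
    return shaped


# A toy six-sample 'footstep': two transient, two middle, two tail samples.
step = [1.0] * 6
print(shape_footstep(step, 2, 4, impact_speed=0.0))  # walking
print(shape_footstep(step, 2, 4, impact_speed=1.0))  # running
```

Because the shaping happens at playback time, a single stored asset covers both gaits and everything in between, which is where the memory saving comes from.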

The T. rex bespoke sound-set has 197 sounds (58.6MB uncompressed) and shares sounds with the other Large Theropods for food, footstep sweeteners, and damage/impact Foley layers. Audio source is created using track-laying, automation of pitch, and lots and lots of EQs (Waves Q10, ReaFIR and FabFilter). Distortion is used to excite sounds made dull from stretching. A bit of impulse response (10/30ms) ties the track laying together. reFuse’s Lowender adds a bit of sweetening to the low end and Sony’s Smooth/Enhance adds some gentle brightness (don’t laugh, try it :) ). Waves RCompressor and L1+ make up the master track for subtle compression. We additionally use MeldaProduction’s tools and one of their best features is the Randomize button, something I really miss on other plug-ins.

After rendering out all the sound assets, they are added to Wwise and implemented.

Those sounds are triggered using a Frontier tool called Motiongraph. It connects to the game and allows us to force any dinosaur to loop any animation. We can scrub through these in real time, adding events and RTPCs. With Motiongraph and Wwise connected to the game, we can move the camera around and hear how our sounds react in context. So we can zoom in or out to hear how the close/distant sounds work together. An IK system triggers footsteps but everything else is hand-authored.

Dinos vs. Park-goers: the screaming, the roaring, the chomping! How did you want this experience to play?

James Stant (JS, Senior Audio Designer): Before we began, we knew that it was important not to jeopardise the game’s intended age rating. The light-hearted tone of work we did on Planet Coaster’s crowds didn’t feel suitable for Jurassic World Evolution and yet we did not want to venture as far as blood-curdling horror screams and excessively gruesome bone crunches.

We began with a linear audio-only storytelling exercise; how do we intend to audibly describe a happy park transforming into catastrophe over the course of 60 seconds? Not only did this help us establish a desirable style for the walla, but it crucially encouraged us to look at the ‘bigger picture’ of how other elements tie in during such scenarios. For example, hearing a dinosaur escape roar influenced us to withdraw the ambience of birds and other wildlife, and introduce park-wide sirens and announcements, which in turn then marked a dramatic change in crowd mood. Through iteration of this aural storyboarding, we kept asking ourselves what we could do to invoke more change and the inspiration led to ideas such as the cycling multi-language safety announcements that ultimately made it through to the final mix.

Unsurprisingly, we had to conduct new group walla sessions which allowed us to cater to the different moods (happy/concerned/terror/excited) and roles (tourist/scientist/security personnel) of our guests. Our volunteering development team members braved the freezing conditions of the British winter and grouped together in the rear of our studio, wearing [non-noisy!] woolly hats and scarves! Trying to then encourage a chilly crowd of people to react to an imaginary dinosaur is no easy task. I would wield a clipboard and creep out from behind parked cars, as well as inviting crowd members to act out a dinosaur fight, as everyone else watched on in amazement/fear. Although the subject matter may be serious, we learned how important it is to inject a dose of fun into these sessions. Keeping energy and attention levels up for a duration of 90 minutes is challenging, so we kept our activities varied and occasionally spontaneous, keeping feedback as encouraging as possible. Also, running such sessions outside was essential; people were less conscious about raising their voice than if they were cramped up inside with headphones on and we didn’t have to worry too much about ‘worldizing’ the recordings. Of course, there is always wind and inevitable traffic noise to deal with, but iZotope RX works wonders for eliminating these.

With the grouped crowd audio covered, we cast our attention to the fine-detail crowd that comes to the forefront of attention when the player zooms right in to ground level. To cover the crowd in such granularity meant that we then had to carry out a number of individual sessions in our recording booth to capture close-level emotes for being eaten, jumping out of the way of 4X4 vehicles, pointing at attractions, etc. While this is one of the most time-demanding aspects, in terms of recording and editing time, we knew that having a plethora of varied reactions would allow us to fight fatigue and repeatedly complement any major animation activity.

UI Sound Design:

What was your goal for sound on the interface screens and menus?

MF: The first audio that a player interacts with, in any of our games, is the UI. So, it has to express what is core about the Jurassic World Evolution experience. Earlier we mentioned location as a context and the UI is an expression of that direction. Thomas Linthe (Lead UI developer) and Louise McLennan (Lead UI Designer) had been thinking about context too and in conversations with them we found that they had been similarly influenced by the command centre UI as presented in the Jurassic World movie.

UI – and I think Thom and Louise will probably agree – is such a core part of the identity of a game that it requires a sonic identity. On Planet Coaster, all UI source is derived from recording small objects: rubbing shot glasses, pressing on metal lids, folding cloth and paper, and so on. It’s a game about crafting worlds, so that’s something I wanted ingrained in how the UI sounds. For Elite Dangerous, there’s always an element of radio signals or electrical distortions in UI sounds because those are two methods (radio waves, electromagnetism) we use to observe the universe.

The same method of approach was used on Jurassic World Evolution. I started out with mechanical and communicative sounds to represent the command centre. There are modems, old clocks, antique machinery, bleeps (Soundmorph’s Galactic Assistant is great for those) and button presses. I mixed and matched all of it as randomly as possible, random effects on random layers; throwing paint at a canvas. That resulted in three hours of material from which the happy accidents were selected. The source is in a separate Reaper project tab which is always open alongside the UI project. I was cutting and pasting between the two to keep track of what had already been used. As the game matured, the UI/UX team tweaked and refined the look and feel of the visuals and alongside them we kept refining UI Audio.

Jim took care of the reward sounds (he’s really good at that stuff!) which intentionally stand out from the interaction sounds. He used a little of my source to tie it together, but most of that is his. Can you imagine how great it is to be hired by the guy for whom the creation of rewarding sounds comes entirely naturally!?

In the Hammond Creation Lab screens, you can go into the hatching bay and create/modify new dinos for your park. There’s sonic feedback for all of that. How did you create those sounds?

MF: Thanks for noticing those Hammond Creation Lab Screens! That was a really fun thing to create audio for, to design those digitized animal sounds for each type of animal DNA. Those screens are designed to look like operating a machine and Jon Pace (Principal UI Developer) created such cool transitions that it was screaming out for a fun audio treatment too. UI developers tend to have a great ear for rhythm, pacing and weight so we iterate closely with them; sometimes they’ll change pacing for us and sometimes we change the weight and feel of a sound for them. It’s also important to add a bit of imagination to these types of UI screen interactions as it conveys that these are not just any buttons, but something a little more special.

I talked about iteration, and since release I’ve been able to add a few things to those screens, like movement audio for the DNA strand. Iteration is a really important part of making games, and one of the things I really like about working in Frontier’s audio department is having the room to explore what the feel of a game is. The end result of our UI is different from where we started out. When we started, the audio for the game was ‘cleaner’, a little more bleepy, which isn’t a wrong direction, if perhaps a little obvious.

At one point, we were talking about music with David Bamber (Senior Designer) with regards to the frequency of disasters, and how that could possibly affect use of music. That conversation inspired the introduction of distortion and analogue elements in UI audio. Those are the kinds of sounds which convey the fragility of technology, which is such a core idea in the Jurassic novels, so it felt right to include it in the sonic identity. In Jurassic World Evolution even the UI doesn’t entirely sound like it will last. That’s another part of the illusion of control.

How have you modified the mixing in the latest patch? What did you change? Why?

JC: We mixed audio throughout the project but in the last month this intensified. Jurassic World Evolution is a large game in terms of possible combinations and different platforms (PC, PlayStation 4, and Xbox One) and we played through the game a number of times on each, making sure everything sat nicely together. Once the game was released, we watched a lot of gameplay videos and played the game extensively ourselves at home.

MF: Inevitably people will play the game in different ways and watching them play allowed a different perspective. The entire audio team made lists, invited feedback from the rest of the development team, and we came up with improvements derived from those. Some of the work is directly mix related. The release build sounds nice, but I think I ended up making it sound a little too brave. Some of the clever things we did are buried in subtlety which isn’t following our "emphasizing change" approach. We’ve made things like the "wind through grass on ground" shots more audible, and the transition when moving from the air down to ground level is much nicer now. The whole sequence is more responsive to where the camera is and what it frames. Rain interacts much more audibly with buildings or objects such as the air conditioning units. We’ve done passes on the vehicles to make them more responsive to player input and done an entire pass on the dinosaurs to make them stand out even more, especially at a distance. I think the mix is a little more reactive and exciting now.

JC: So it’s based on feedback really — stepping back for a few days and listening with fresh ears. It’s so amazing that we get a chance to do this now and it certainly makes us less nervous during the last stretch of a project. Back in the day, we wouldn’t have had that opportunity.

MF: Yeah exactly, like being able to change implementations based on community feedback and our own play-through. We can make an experience better after release now. A great example is we have contextualised some of the VO so it acts more deliberately as feedback when and where it is useful. Claire Dearing’s (Bryce Dallas Howard) lines about water needs of dinosaurs now trigger when selecting a thirsty dinosaur and not when a dinosaur in the park triggers its thirst need. The latter is only apparent if the stats are open, which is when the line now triggers. That’s a nuance we didn’t manage for release and it is helpful feedback for players now that it is contextualised more.

JC: We’ve also increased the chances of some lovely music being played at an appropriate time in your park.

Interactive Music System:

Can you tell me about your approach to music in the game? How did you design your music system? And why is having interactive music (i.e. related to player input) more desirable than just having a continuous underscore?

JC: We are lucky enough to have Technical Sound Designer Stephen Hollis on the team to design and implement our music systems. Steve has a very musical background and is an accomplished musician and composer in his own right, but he can also code in C++. He’s the ideal self-iterator!

Stephen Hollis (SH, Technical Audio Designer): I think the time we had spent working on our previous game Planet Coaster had taught us a lot about how to approach designing a music system for a park builder. The player has free control over where the camera is and what it is pointing at, and can decide to change this entirely in a very short space of time. What you end up with is a very large, continuously changing dynamic range that you then have to fit music into. Finding a solution that doesn’t involve the music cutting in and out constantly can be quite a challenge, but luckily this time we had a head start.

Another discovery we had made during our time on Planet Coaster was our composer, Jeremiah Pena. Matthew found him while listening to portfolio websites and he really stood out to us. He did a great job with some of the orchestral pieces in Planet Coaster, so we felt he made an excellent fit for Jurassic World Evolution. The experience of working with him has been nothing short of a pleasure; he wrote over two hours of music for the game in total, masterfully taking inspiration from the original John Williams, Don Davis and Michael Giacchino scores and blending it with his own unique style. He has a bright future ahead of him and I believe his music speaks for itself.

One key part of our design philosophy when approaching the music system was to avoid one-to-one relationships between game events and music starting/stopping. Simply put, when a player begins to realize that when they do a certain thing then music always starts, suddenly they’ve seen behind the curtain and the magic is lost. Instead we prefer to create a large quantity of simple rules that, when combined, form a larger complex system. The result is music playing at times that feels organic, which is much more satisfying for players. There are one or two notable exceptions where we take a more linear approach, such as releasing a dinosaur, but a large part of our music works in this way.

Another key design philosophy is that once we’ve made a musical decision, we try to commit to it. One sure-fire way to take a player out of an experience is to tie musical decisions far too tightly to what is happening right now in-game. Say a piece of background music begins, and a few seconds later the player goes into a menu that usually has music; dropping the background music at this point in favour of the menu music better represents the moment to moment actions of the player, but results in a much less satisfying experience as music cuts in and out and never has a chance to grow. We prefer to look at the larger picture to try to express the player’s story through music, rather than necessarily live in the moment all the time. If we move from one mood to another, instead of instantly switching the music track we may begin to fade out the music in layers over a longer period of time, until it fizzles out and is replaced with the new mood. This method is a lot less jarring, and the player may not even notice it is happening at all. There are, of course, exceptions to this rule, too. For example, we decided to transition away from Tension music and straight into the Control Room music when a player moves into this screen whilst a disaster is happening, primarily to provide the mood of a safe place that the player can always access when things run awry.
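
The layered fade-out described above can be pictured with a small Python sketch. This is an assumption-laden illustration rather than the game's music system: the function name, the idea that layers fade strictly one after another, and the equal time slice per layer are all invented here.

```python
def layered_fade_gains(elapsed_s: float, layer_count: int, fade_s: float) -> list[float]:
    """Gain of each music layer `elapsed_s` seconds into a mood change.

    Layers fade out one after another across `fade_s` seconds instead of
    the whole track being cut at once (ordering is an assumption)."""
    if layer_count <= 0 or fade_s <= 0:
        raise ValueError("layer_count and fade_s must be positive")
    per_layer = fade_s / layer_count  # time slice in which one layer fades
    gains = []
    for i in range(layer_count):
        start = i * per_layer
        progress = (elapsed_s - start) / per_layer
        progress = min(max(progress, 0.0), 1.0)  # clamp to the layer's slice
        gains.append(1.0 - progress)
    return gains
```

With three layers and a 30-second fade, halfway through the first layer is silent, the second is at half level and the third is still untouched, so the old mood thins out gradually before the new one replaces it.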

How we come up with the rules that govern this system is a fun process! We start by implementing the simplest system we can think of, usually by triggering music every X minutes, and then ask everyone on the team to comment when they feel that the music worked particularly badly or well. It gets everyone to contribute, and you start to build up an understanding of where music and gameplay clash. Usually you discover holes in the mood spectrum of your score when you do this, so we could catch these early on and report back to Jeremiah on the type of music that we were missing. We tackle each problem one by one, iterating over and over, until we’re left with a large system that can intelligently pick and choose the right piece of music for the right time, and remove it when appropriate. We like to think of it as an AI spotting algorithm, where a director and composer get together to pick the perfect time to accompany a film with music. Our algorithm attempts to do the same for a game. It’s not always perfect, but we hope it leads to a musical experience that will leave the player satisfied for hundreds of hours without getting fatigued.

MF: We have to also mention the music on the 4×4 vehicles! Using ‘location’ as a guideline for direction has been mentioned a few times in this interview and the ground vehicles are an example of how such a direction can manifest itself practically. Having music playing in the park is a great texture and generally, in the real world we don’t really hear music in our environments unless it is warm and we have our windows open. So to me there’s already a sense of temperature if you can hear music outdoors. It just doesn’t happen that much in winter! Furthermore, we focused our music selection on the Middle Americas and Janesta Boudreau (our music licensing specialist) hunted down and licensed Madacy Mariachi Band – Las Gaviotas which we used as a film-inspired guideline (and Easter Egg) for the rest of the music.

https://www.youtube.com/watch?v=WdrSM_BBXUU

Janesta found several libraries that charge for usage rather than per song, which allowed us to create a healthy selection of tracks, so if you ever wondered if it is worth having a music supervisor…

We have a night and daytime playlist and a Spanish-speaking DJ, our very own Pablo Cañas Llorente (Linear Audio Designer), which further conveys that this is an in-world station. It originates from somewhere in-world (presumably mainland Costa Rica), so we can distort the signal when a storm is about to roll in, which is a subtle but great dramatic tool to reinforce the island’s isolated location in the ocean.

Challenges and Changes:

Technically, what was your biggest challenge for sound on this game? How did you tackle it?

Will Augar (WA, Audio Tech Lead): One of the main technical challenges on the project was how to manage the thousands of potential audio sources in the game world.

Each entity in the game world can have multiple emitters, each emitter playing multiple sounds. Busy scenes in Jurassic World Evolution can have around 2,000 audio sources on-screen, which is a lot of potential audio! The target platforms do not have enough CPU resources to play all the voices required for this number of audio sources. Even if they could, we would not want to hear this many voices at once; the mix would be far too cluttered.

Often in games the solution is just to play the nearest n audio sources. This works for some kinds of games. However, in the large, open world simulation of Jurassic World Evolution, this results in missing key pieces of audio. For example, when the T. rex roars it’s for an important reason so we want to hear her even if she is on the other side of the park.

The custom solution we developed allowed us to prioritize audio sources based on their in-game context. Simple, generic properties like distance to listener and attenuation range can be used alongside more context-specific things like, "Is the dinosaur a T. rex?" Or, "Is the dinosaur fighting?" Once the sound sources are categorised into general types such as dinosaurs or buildings, a priority recipe can be written using these properties and applied to each category. The system allowed us to take a really aesthetic-based approach to voice management that performs very well in terms of the CPU usage.
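
As a rough picture of what a per-category "priority recipe" might look like, here is a short Python sketch. It is not Frontier's implementation: the class, the tag names, the recipe weights and the `select_voices` helper are all hypothetical, chosen only to show context-specific properties outweighing plain distance.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AudioSource:
    name: str
    category: str             # general type, e.g. "dinosaur" or "building"
    distance: float           # distance to the listener
    attenuation_range: float  # distance at which the source becomes inaudible
    tags: set[str] = field(default_factory=set)  # context, e.g. {"t_rex", "fighting"}


def proximity(src: AudioSource) -> float:
    """1.0 at the listener, falling to 0.0 at or beyond the attenuation range."""
    if src.attenuation_range <= 0:
        return 0.0
    return max(0.0, 1.0 - src.distance / src.attenuation_range)


def dinosaur_recipe(src: AudioSource) -> float:
    score = proximity(src)
    if "t_rex" in src.tags:      # "Is the dinosaur a T. rex?"
        score += 2.0
    if "fighting" in src.tags:   # "Is the dinosaur fighting?"
        score += 1.0
    return score


def building_recipe(src: AudioSource) -> float:
    return 0.5 * proximity(src)  # buildings rely on generic properties only


RECIPES: dict[str, Callable[[AudioSource], float]] = {
    "dinosaur": dinosaur_recipe,
    "building": building_recipe,
}


def select_voices(sources: list[AudioSource], max_voices: int) -> list[AudioSource]:
    """Keep the highest-priority sources, scored by their category's recipe."""
    ranked = sorted(sources, key=lambda s: RECIPES[s.category](s), reverse=True)
    return ranked[:max_voices]
```

Under these made-up weights, a roaring T. rex on the far side of the park still outranks a nearby building hum, which a nearest-n rule would not allow.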

Another significant technical challenge we faced was for the environment audio. Environments in the game can be fully customised by the player; forests, lakes, and the terrain topology can be edited and sculpted in real time using a set of tools. This malleable landscape means that classic techniques of marking up static environment zones for each world would not be possible.

We use render masks to generate a low-resolution grid that divides the world up. The system looks at the render masks and calculates the ratio of different environment types, then determines the dominant type for each grid square. When the player edits the terrain, we generate a callback for the squares that changed and update the environment ratio using the render mask. This dynamic solution gives us the ability to automatically represent any environment created by the player and do so in real time!
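
The grid idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the render mask is modelled as a 2D list of environment-type labels, and the function names and the edit callback signature are hypothetical rather than taken from the game.

```python
from collections import Counter


def dominant_type(mask: list[list[str]], x0: int, y0: int, size: int) -> str:
    """Most common environment type inside one grid square of the mask."""
    counts = Counter(
        mask[y][x]
        for y in range(y0, min(y0 + size, len(mask)))
        for x in range(x0, min(x0 + size, len(mask[0])))
    )
    # Ties are resolved by whichever type was encountered first.
    return counts.most_common(1)[0][0]


def build_grid(mask: list[list[str]], size: int) -> list[list[str]]:
    """Divide the mask into size x size squares and record each dominant type."""
    return [
        [dominant_type(mask, x, y, size) for x in range(0, len(mask[0]), size)]
        for y in range(0, len(mask), size)
    ]


def on_terrain_edited(mask, grid, size: int, x: int, y: int) -> None:
    """Edit callback: recompute only the grid square containing cell (x, y)."""
    gx, gy = x // size, y // size
    grid[gy][gx] = dominant_type(mask, gx * size, gy * size, size)
```

Recomputing only the touched square keeps the update cheap enough to run whenever the player sculpts terrain or paints forest.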

Armed with this zonal information, we added multi-point emitters for zones of the same dominant environment type and set auxiliary sends for all emitters based on the zone the listener is in, providing dynamic, environment-based impulse-driven reverbs.
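
One way to picture how zonal information feeds emitters and reverb sends is the Python sketch below. It is a simplification under assumptions: the grid is a 2D list of dominant environment types, each grid square contributes one position at its centre, and the reverb bus names are invented placeholders rather than names from the game's Wwise project.

```python
from collections import defaultdict

# Hypothetical mapping from environment type to a reverb bus name.
REVERB_BUS = {
    "forest": "Reverb_Forest",
    "water": "Reverb_Water",
    "grass": "Reverb_Open",
}


def emitter_positions(grid: list[list[str]], square_size: float) -> dict[str, list[tuple[float, float]]]:
    """Group grid-square centres by dominant environment type, giving the
    position list for one multi-point emitter per type."""
    positions = defaultdict(list)
    for gy, row in enumerate(grid):
        for gx, env in enumerate(row):
            centre = ((gx + 0.5) * square_size, (gy + 0.5) * square_size)
            positions[env].append(centre)
    return dict(positions)


def listener_reverb_bus(grid: list[list[str]], square_size: float, x: float, y: float) -> str:
    """Pick the reverb bus for the grid square the listener is currently in."""
    gx = min(int(x // square_size), len(grid[0]) - 1)
    gy = min(int(y // square_size), len(grid) - 1)
    return REVERB_BUS[grid[gy][gx]]
```

A single ambience per environment type then plays from many positions at once, while every emitter's auxiliary send follows the listener's current zone.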

Final Thoughts:

What are you most proud of in terms of sound on Jurassic World Evolution?

MF: Watching people play the game as they expertly zip the camera around, navigate menus, observe dinosaurs and manage disasters – the audio experience is dynamic, satisfying and seamless. Almost every interaction is accompanied with something unique and the soundscape is varied even when user interaction is sparse.

There’s a beautiful moment when managing a crisis and the park is full of dinosaurs, helicopters and vehicle sounds — a real hustle and bustle. When the last dinosaur transport goes off-screen a tranquillity returns and it’s an emotional moment that feels like having been an observer in the park. It’s even more perfect when Jeremiah Pena’s lovely music comes in exactly at such a moment. That’s a moment that really resonates with me, feels real.

I am super-proud of the work the team did on tech, dinosaurs, buildings, terraforming, vehicles and music. The mix was the biggest challenge in terms of how we’d bring all those points of view together.

DM: I am delighted with all the dinosaur sound design we did, but especially so with the species that have never featured in the films. There is so much variety and imagination in each one, and they really stand up well against the Skywalker dinosaurs. Everyone saw the opportunity to do something original and creative, and the quality of the work reflects this.

JS: We pride ourselves on the attention we pay to detail and this is nicely demonstrated by the work that went into the game’s building ambiences. We pursued quite a high degree of hyper-realism, as the player cannot move or see inside the buildings, but we still wanted to exercise storytelling and provide each with a unique sonic flavoring. This was not just limited to the main body of the building, as we would decorate individual modules such as air con units and communications towers by adding subtle layers of detailing with very tight attenuation radii. Passing by these buildings, either with the default camera or in a controllable vehicle, then offered an embellished soundscape with a believable sense of life and activity emanating from what could otherwise be dull and soulless structures.

JC: I think we took a much cherished legacy and did it some justice. Duncan did a superb job heading up the dino team. I love how all the different creatures have a distinct sonic identity. There’s so much depth and difference in there. Steve once again created and curated a beautiful music system that really presents Jeremiah’s fantastic score in its best light.

Personally speaking, I’ll always have a soft spot for my Hadrosaurs and, having designed and implemented the helicopters and sky-cranes, I’m particularly pleased with how they turned out. There’s a lot of attention to detail there but thanks to Will and Jon’s sound map recipes, they do not break the bank!

This project was a huge team effort and I’m immensely proud of the way the entire game sounds and all the many contributions my team made to it. There’s great sound design in there built upon some very solid, well-designed systems. And I would have to single out Matthew’s contribution to the project. His creative vision and ambition were matched only by his technical propriety, a boundless energy and some very adept mixing (the man has great ears).

If you work with the best people, anything is possible.

A big thanks to Jim Croft, Matthew Florianz, Duncan MacKinnon, Stephen Hollis, James Stant, and Will Augar for giving us a look at the detailed and imaginative sound of Jurassic World Evolution – and to Jennifer Walden for the interview!