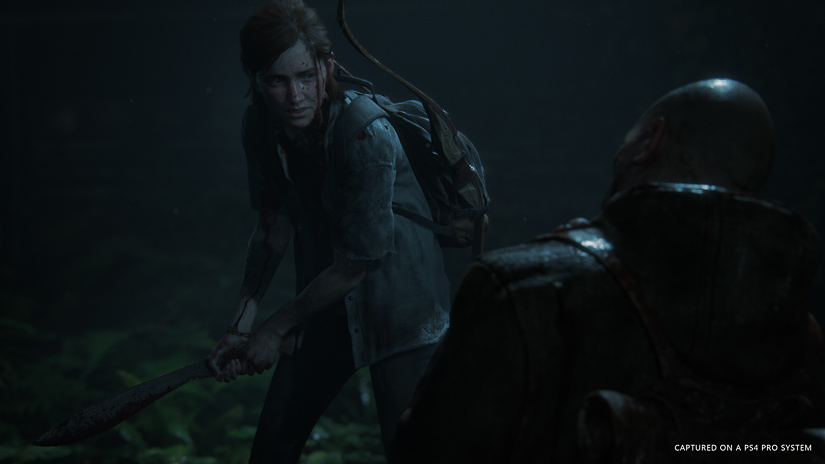

Even before its release, Naughty Dog’s The Last of Us Part II was winning awards: for ‘Most Wanted Game’ and ‘Most Anticipated Game.’ That much fan pressure had to make the game development team sweat. Could they make another hit like The Last of Us? Would the fans love it?

The answer is yes. They can. They did. The Last of Us Part II has officially become Sony’s fastest-selling game. The wait is over and the fans are feasting on Naughty Dog’s latest offering. And like its predecessor, the game is a feast for the mind, the eyes, and the ears. Audio Lead Rob Krekel (who was a sound designer on the first release, working under Audio Lead Phil Kovats) and his sound team at Naughty Dog may have had a daunting task in front of them, to build on the success of The Last of Us, but they more than delivered.

Here, Krekel, Justin Mullens (Sound Designer), Neil Uchitel (Senior Sound Designer), Maged Khalil Ragab (Dialogue Supervisor), Mike Hourihan (Supervising Dialogue Designer), Grayson Stone (Dialogue Coordinator) and Beau Jimenez (Sound Designer) talk about ways of connecting the player to the game through sound — by building reactive environments, by using extensive Foley, by designing human attributes into the various deteriorated stages of Infected, and by enveloping the player with a rich, well-balanced surround mix.

The Last of Us Part II – Official Accolades Trailer | PS4

Creative director Neil Druckmann on the first The Last of Us said, “The game’s audio was approached in a more ‘Hitchcockian’ way — more about the psychology of the story and using sound to support that.” Also, “don’t be afraid to be subtle. Be brutal, without being big.”

Was that the same approach for The Last of Us Part II?

Rob Krekel (RK, Audio Lead): That same DNA definitely still exists in the approach we took to The Last of Us Part II. Detail, subtlety, and hyperrealism were important pillars of this game’s soundscape. There are more moments and opportunities to go big in The Last of Us Part II, but we absolutely still retained the idea that not everything needs to be big to be evocative.

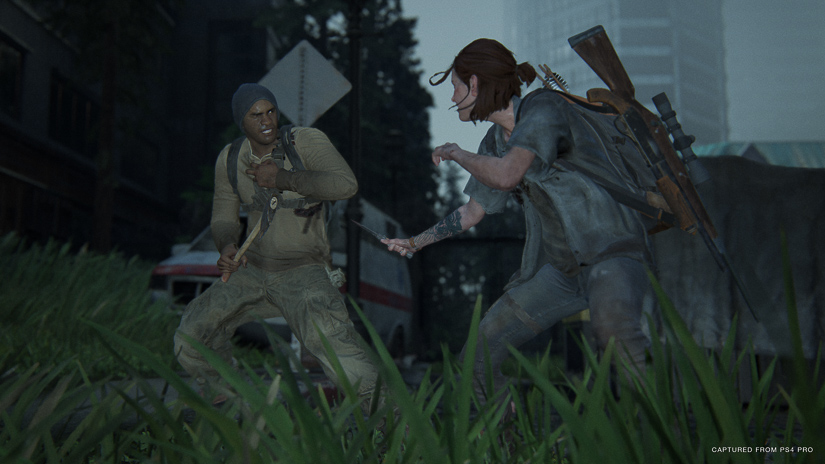

The game is a meditation on the cycle of violence that comes from revenge at any cost, so making things feel real and often unsettling was paramount in creating the desired experience for the player and having them connect to those themes in a certain way.

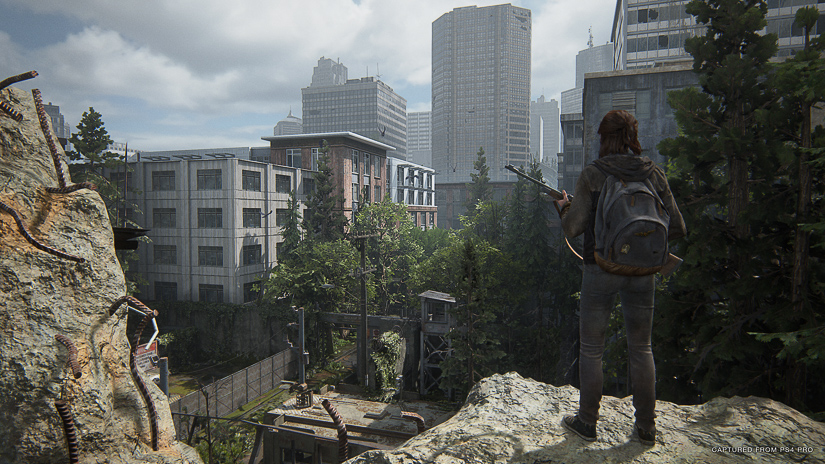

The Last of Us Part II is set in parts of the country the first game didn’t explore — with new seasons and climates from snowy mountains to the temperate forests in the NW — and the main locale being (what remains of) Seattle. Can you tell me about the designs for some of these areas?

Justin Mullens (JM, Sound Designer): Making a game in Seattle means one thing for sure – rain. And since about a third of our Seattle locations take place while it’s raining, it meant that this weather element needed proper attention early in production. We ended up spending a lot of time sitting in the rain.

We did our best to ensure accurate biophony and geophony per area based on the season, from Jackson, Wyoming to Seattle, Washington. Ultimately, we did end up recording and using elements from all over, as long as they had minimal animal noise and zero machinery or human “sound pollution” in them.

What feeling did you want the sound to impart to the player?

JM: While designing the ambiences for The Last of Us Part II, I spent a lot of time considering the “openness” or expansive quality of one space versus the dense or enclosing aspects of an opposite space. Just like in music, my favorite parts are the transitions from one section to the next. I paid particular attention to this flow from one kind of space into the different environments it connected to.

I wanted open outdoor spaces to have a soundstage that felt wide and deep in contrast to the tight and claustrophobic interiors. Because of that, I spent a lot of time adjusting the mixture of elements in the sounds and the density of emitters in the level editor to support this movement, both as areas opened up (from an interior hallway into a back alley, into a parking lot, and into an open field) and as areas funneled down (such as a hotel lobby that leads into a hallway, down an elevator shaft, and through a collapsed tunnel into an infected sewer). Reverbs played a big role in further supporting these designed contrasts of dense versus open or active versus dead.

What went into creating those environments?

JM: There were a few major considerations that dictated the direction of ambiences in The Last of Us Part II. Being a sequel, it was very important to ensure, first and foremost, that the feel of the environments in the first game was carried forward, but with extra added fidelity and granularity that the newer technology and tools allowed. It involved wrapping the lineage of our ambience progression from Uncharted 4 and Uncharted: The Lost Legacy back into the aesthetic of The Last of Us. Since the locations in The Last of Us Part II were much more condensed than the globe-hopping nature of the Uncharted series, it allowed more time to focus in on building an environment that had almost all traces of human activity removed.

What were some custom recordings you captured for this game?

JM: The search for recording environments devoid of human activity was tough, as usual, and we are always on the hunt for locations that would allow us to record as much clean “air” as possible.

Stories in a setting like ours have the added challenge of being post-electricity most of the time, which means that both exterior and interior spaces require the same care when recording and choosing elements to cut together. A recording trip to an abandoned mining town near Death Valley yielded a derelict train car, a partially destroyed concrete jail cell, the concrete husk of what I believe was previously a bank, some wood-framed shacks, and a rock-blasted mine shaft. This area provided some incredibly quiet and “sparse” recordings that became the foundations for many of the interior spaces in the game.

Recordings we made around the city also played a big role. A bunch of emitters for rain surfaces were recorded around Los Angeles neighborhoods during the early months of 2019, when we were getting a lot more rain than usual. Not only did it help Los Angeles recover from a major drought, but the timing worked perfectly with our audio production schedule.

Beau Anthony Jimenez, Sound Designer at Naughty Dog shares some of the creative processes explored to create the sound for the game

What’s your workflow for designing environments and environmental sounds? Do you start in a DAW (Pro Tools, Reaper, or Nuendo) first to create ambience layers and individual assets and then implement those into the ND game engine?

JM: The workflow to design the ambience was fairly straightforward. It began with laying out environmental audio regions in our level editor to match the level and art layout from the Design and Background teams.

Once we have the regions set up for an area, we can start to adjust parameters of the individual spaces, connect the spaces together, and assign reverbs and reflectivity on a region-by-region basis.

The asset creation process would primarily occur in “real-time,” while I flew around an environment with a free cam. Because our ambi beds are intentionally sparse and a majority of our environmental audio is composed using hundreds of overlapping emitters per area, the only way to hear the ambience of an area is to move around inside of it.

While I’m moving around a space, I will create new elements within my DAW as needed and then export/implement them into the levels as I go. I tended to start areas very generally with broad strokes — using beds, collapsing quads, and splines — working my way towards specific emitters and physicalized/animated elements as I drilled down on an area.

While working, I would A/B against a few important references — The Last of Us, No Country, and The Revenant — to help ensure consistency across the whole game.

Ellie has more options for traversing the landscape, like climbing, jumping over gaps, using ropes to scale vertical terrain and swing over obstacles, riding a horse, swimming…. Were these covered by the Foley team?

Neil Uchitel (NU, Senior Sound Designer): Let’s look at some of the different aspects of the Foley.

Foley and Motion Matching:

It’s impossible to talk about Foley in The Last of Us Part II without discussing the implications of using a motion-matching animation system in a video game. Originally, our plan was to simply retune the existing hands/feet Foley system from Uncharted 4, but once we started to see what the Animation department was doing with motion matching, we realized that system wouldn’t be able to account for the staggering variety of traversal possibilities, and the analog nature of it.

Just getting Foley to work at all in a motion matching system was its own challenge, as anywhere from one to six animations are simultaneously playing on every game frame. Every previous modern game at Naughty Dog employed a sophisticated set of blends, and Foley sounds were simply played from whatever animation was playing at the time. It was a great system that, even on The Last of Us, still looks and sounds amazing.

In a motion matching system, however, each game frame is not just a blend. Numerous animations play simultaneously, and the image on any given frame is auto-generated from what is essentially a 4D algorithm of potential choices. Meaning, there might not even exist an animation to play a sound tag from. There is a lot to say about Foley and motion matching, which could take an entirely separate article.

Suffice it to say, the Foley system needed a far more substantial upgrade than we anticipated, and its evolution was nearly continuous until the end of development.

We are very fastidious about keeping a recognizable and coherent overall sonic signature for each of our games. Additionally, it is very important for us to establish the sound of the Foley and develop the system as soon as we can, as we believe that Foley, uniquely, invisibly, and intimately connects the player to the character, and thus to the story. The sound recedes and the narrative has a chance to succeed or fail on its own without distraction. To us, Foley is the ground floor of player immersion in a game like The Last of Us Part II. But given that the studio was simultaneously implementing a new animation system, this proved to be one of the biggest challenges in the Foley system. It was possible that the game as a whole could fail if the player was inhibited from connecting to the character through a poorly or partially implemented system.

Foley Production:

I supervised all the systemic Foley sessions on the Foley stage at Paramount Pictures. I spent over 40 working days in early 2019 (approx. 2 months) working with artists Dawn Lunsford, Alicia Stevenson, and mixer David Jobe — three extraordinarily talented people with seemingly endless energy, humility, and willingness to try every possible path to make each surface sound unique and enjoyable. Shannon Potter, Supervising Sound Editor at Formosa Interactive, joined me by producing the sessions and acting as a second set of ears. Recording Foley requires very active, intense listening focus every second to maintain consistent surface texture and performance continuity.

Once these sessions were complete and the palette created, the IGC Foley sessions began, which Shannon supervised without me. We originally planned to record two to three different shoe types, but due to memory constraints, we could only afford one shoe type: boots.

We then employed SCREAM’s artistic EQ filters, in addition to sweeteners, to create the sound for Ellie’s sneakers. Not only did we get “two for the price of one,” but doing so also pared down our Foley budget significantly, in terms of stage time and subsequent editing labor.

In addition to shoes, we recorded five full surface archetypes for bare feet for the Infected and three partial surface sets for socks.

Complex surfaces could take over a day to record; the simpler ones could take as little as a third of a day. Generally we would record 50 to 60 assets per locomotion tag — up to 16 different tags, for three movesets (standing, crouch, and prone) with a large set of special recordings just for prone. That allowed us to have 30 initial selects, in order to implement eight to 15 discrete assets in the game and enough alts in the event any of them stood out too much.

Pro Tools sessions were nearly 10 hours long, so I edited a few template sessions, as well as a host of other smaller or unique Foley sessions. I then passed them off to our two external contract Foley editors, Eolyne Arnold and Matt Telsey, with whom I worked closely to guide the editorial aesthetic of this game. Editorial took approximately six months.

In the end, 40 distinct surface types ended up in the game, each comprising anywhere from 1,000 to 2,000 assets. Horse hooves and dog feet were also recorded for six archetypal base surfaces. By way of comparison, our Uncharted 4 Foley banks were anywhere from 300 to 400 sound IDs, and the banks in The Last of Us Part II were anywhere from 1,500 to 1,800 sound IDs. By every measure the scale of the Foley work was unparalleled in Naughty Dog’s history.

Some special details of note: stepping in blood puddles plays special “sticky” sounds. And when you walk out, you leave footprints that are accompanied by progressively diminishing sticky footstep sounds. Surface probes are now multi-sampling, so, for example, the sound of stepping on broken glass changes depending upon how much glass is sampled underfoot. Finally, we implemented surface blending and additives, so when you step on a surface that is a blend between two art layers, it not only plays a blended sound, but an additional surface (e.g., glass or moss) can play on top of that blend. Feet and hands surface probe independently, but we couldn’t implement shoe on/off on the same character, due to in-game memory constraints.
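To make the multi-sampling and blending idea concrete, here is a minimal Python sketch. It is not Naughty Dog's implementation: the function name `probe_footstep`, the sample format, and the idea of using hit fractions directly as gains are all assumptions made for illustration. Each probe sample reports the base art layer it hit plus an optional additive surface, and the result is a set of relative gains for the blended base layers and for any additive played on top.

```python
from collections import Counter


def probe_footstep(samples):
    """Turn multi-sample probe hits into relative layer gains.

    `samples` is a hypothetical list of (base_surface, additive_or_None)
    tuples, one per probe sample taken under the foot or hand.
    """
    if not samples:
        return {"base": {}, "additive": {}}

    count = len(samples)
    base_hits = Counter(base for base, _ in samples)
    additive_hits = Counter(extra for _, extra in samples if extra is not None)

    return {
        # Base layers share the blend in proportion to how often they were hit.
        "base": {name: hits / count for name, hits in base_hits.items()},
        # Additives scale with coverage, so more glass underfoot is louder.
        "additive": {name: hits / count for name, hits in additive_hits.items()},
    }


# Half concrete, half dirt, with glass under two of the four samples.
step = probe_footstep([
    ("concrete", "glass"),
    ("concrete", None),
    ("dirt", "glass"),
    ("dirt", None),
])
print(step)
```

In this sketch a foot that lands entirely on glass would produce an additive gain of 1.0, while a foot that clips only one sample of glass would produce 0.25, which is one simple way a sound could change "depending upon how much glass is sampled."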

I recorded water myself separately, along with Sam St. Clair, our audio implementer. I love the sound of water, and so over several games I’ve tried to push the level of detail in our water systems. I think water is often given short shrift in games as it is, understandably, very complex and expensive to implement successfully. So, for the first time in our games, water is “depth-aware.” There are six different depths of water footsteps, as well as splashes for both the player character and NPCs, including horses and dogs. This also includes variations for both foreground and background water — everything from slightly wet surfaces to full swimming — with shared assets across multiple systems. One of the little subtleties we added is that when the player comes out of water that is at least ankle height, wet socks play for a little while until they visually “dry out.”
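A depth-aware footstep selector along these lines could be sketched as follows. This is an illustrative Python example only: the interview confirms six depths and the wet-sock behavior, but the bucket names, the depth thresholds in metres, and the fixed drying timer are assumptions invented for the sketch.

```python
from bisect import bisect_right

# Hypothetical thresholds (metres) separating six hypothetical depth buckets.
DEPTH_LIMITS_M = [0.02, 0.10, 0.30, 0.60, 1.20]
DEPTH_BUCKETS = ["wet_surface", "puddle", "ankle", "knee", "waist", "swim"]
ANKLE_INDEX = DEPTH_BUCKETS.index("ankle")


def water_bucket(depth_m):
    """Map a water depth to one of the six footstep buckets."""
    return DEPTH_BUCKETS[bisect_right(DEPTH_LIMITS_M, depth_m)]


class WetSocks:
    """Plays wet-sock steps for a while after leaving ankle-deep water."""

    def __init__(self, dry_time_s=20.0):
        self.dry_time_s = dry_time_s  # assumed stand-in for the visual dry-out
        self.remaining_s = 0.0

    def on_step(self, depth_m, in_water):
        if in_water:
            bucket = water_bucket(depth_m)
            if DEPTH_BUCKETS.index(bucket) >= ANKLE_INDEX:
                self.remaining_s = self.dry_time_s
            return f"water_{bucket}"
        return "wet_sock" if self.remaining_s > 0 else "dry"

    def tick(self, dt_s):
        """Advance the drying timer by dt_s seconds."""
        self.remaining_s = max(0.0, self.remaining_s - dt_s)


socks = WetSocks(dry_time_s=10.0)
print(socks.on_step(0.2, in_water=True))   # stepping through ankle-deep water
print(socks.on_step(0.0, in_water=False))  # first step back on dry ground
```

In the game the drying is tied to the visual state of the character; the timer here is just the simplest stand-in for that signal.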

Since the first part of the game occurs in snow, I really wanted to make sure that our snow sounded great, so as to set proper expectations for the rest of the game. Red Dead Redemption 2 was always in my mind when I was thinking about how I wanted it to sound. Over one holiday break, I recorded full locomotion sets of deep snow and thin, crunchy snow. Working with technical artist Steven Tang, we also created an in-between surface representing “packed” snow, that played when walking over snow that had been previously visually deformed.

Foley and Prone:

By far the biggest addition to the character Foley system was the inclusion of “prone” as a moveset. It comprised about half of the sound IDs in a surface bank. Prone animations were being continuously iterated upon by the Animation department, and some aspects of the moveset are entirely analog. Meaning, character speed is entirely dependent upon how far you move the stick.

This meant that, for some of the prone traversal sets, we couldn’t use discrete intensity-bucketed assets as we often do for feet and hands, since the transition between buckets would be clearly heard. I initially experimented with a few carefully designed loops, which later expanded with additional tech implemented by both Jonathan Lanier, our audio programmer, and several other members of the programming team, with whom I worked intensely and continuously throughout development. This allowed us to create a nearly entirely analog Foley system for prone (hands, arms, feet, and leg drags). Loops turned out to be quite a useful method to create Foley sounds, especially when modulated by ADSR blooms scripts, some of which were further modulated by gameplay variables. We also had substantial in-game memory constraints that had not changed since Uncharted 4. Using loops was a judicious way to get nearly infinite variation with a minimal memory footprint.
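As a rough illustration of why a continuously modulated loop avoids audible bucket transitions, the Python sketch below drives a loop's gain directly from stick deflection. It is a simplification and not the SCREAM scripting described above: the class name `ProneDragLoop` is hypothetical, and a two-stage attack/release smoother stands in for a full ADSR envelope.

```python
class ProneDragLoop:
    """Continuously follows stick deflection instead of switching assets."""

    def __init__(self, attack_s=0.05, release_s=0.25):
        self.attack_s = attack_s    # assumed time to rise toward a louder target
        self.release_s = release_s  # assumed time to fall toward a quieter target
        self.gain = 0.0

    def update(self, stick, dt_s):
        """Move the loop gain toward the stick deflection (0.0 to 1.0)."""
        target = min(max(stick, 0.0), 1.0)
        time_const = self.attack_s if target > self.gain else self.release_s
        step = dt_s / time_const if time_const > 0 else 1.0
        self.gain += (target - self.gain) * min(step, 1.0)
        return self.gain


loop = ProneDragLoop(attack_s=0.1, release_s=0.2)
for stick in (0.2, 0.5, 1.0, 1.0, 0.0):
    print(round(loop.update(stick, dt_s=0.05), 3))
```

Because the gain glides between values rather than jumping from one recorded intensity to another, a single looping asset can cover the whole speed range, which is consistent with the memory argument made above.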

The addition of prone character Foley then exposed the need to make the weapons and objects the player can hold in-hand surface-aware. It wouldn’t suffice if weapons held while crawling on the ground simply played a generic sound, so each of the five primary weapon types will play the proper surface sound when in prone, as well as regular traversal over barriers — vaulting, clambering, etc.

The 13 Melee weapon types, as well as the six throwable object types, cannot be carried in prone, but are also surface-aware in regular traversal. The game also detects whether the player is wearing gloves on any hand and plays a “gloved” sound for that hand, which is also surface-aware. Personally, surface-aware weapon effect sounds are some of my favorite subtle touches in the Foley system.

Foley and the Backpack:

The Character department implemented a new “soft-body” physics-based backpack for the player, for a more natural motion than found in The Last of Us. To compound the complexity, there were four full motion-matching traversal animation sets based upon tension state in the game. Additionally, the backpack sits on the player differently and changes its movement based upon those tension states. Given that the player faces the character backpack the entirety of the game with three different player backpack types, this also needed a new system to mimic the analog nature of the backpack movement.

Working with gameplay programmer Ryan Broner, I was able to implement an almost entirely analog system for playing backpack sounds to match both the motion of the player and the tension state of the game. The backpack on/off sounds are also surface-aware, so it will play the appropriate surface type when it hits the ground or water.

Implementing that system then exposed another “blank spot,” which was what to do about holstered weapons on the back? Using the system I had created for the backpack, I enlisted another gameplay programmer, Ryan Huai, to help create a system that would know what weapons were externally holstered, place them in a priority list, and play Foley sounds based upon that priority. Michael Marchisotto, who worked on all the Melee sounds in the game, recorded and provided special assets for the system.

Due to a variety of in-game resource limits, we ended up being able to play one of three different primary weapons (bow, long gun/shotgun, and flamethrower), one of seven different Melee weapon types, and arrows in groups of one, two, or more than three arrows. All working perfectly in sync with, but independent of, whatever backpack type is on the player.
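The priority-list idea can be sketched in a few lines of Python. Everything specific here is an assumption for illustration: the function name `holster_layers`, the layer names, the priority order of the three primary weapons, and the choice to treat three or more arrows as a single "many" group.

```python
# Hypothetical priority order; the interview does not say which weapon wins.
PRIMARY_PRIORITY = ["flamethrower", "long_gun", "bow"]


def holster_layers(holstered, melee=None, arrow_count=0):
    """Pick the Foley layers to play for externally holstered gear."""
    layers = []

    # Only one primary weapon layer is played: the highest-priority one present.
    for weapon in PRIMARY_PRIORITY:
        if weapon in holstered:
            layers.append(f"primary_{weapon}")
            break

    # At most one melee weapon layer.
    if melee is not None:
        layers.append(f"melee_{melee}")

    # Arrows are grouped by count rather than voiced individually.
    if arrow_count == 1:
        layers.append("arrows_one")
    elif arrow_count == 2:
        layers.append("arrows_two")
    elif arrow_count >= 3:
        layers.append("arrows_many")

    return layers


print(holster_layers({"bow", "long_gun"}, melee="pipe", arrow_count=5))
```

Capping the output at one layer per category is what keeps the voice count and memory cost bounded, whatever the player happens to be carrying.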

Foley and Tools:

The Foley system in The Last of Us Part II — which is really a system of systems, as is game audio as a whole — stretches every resource in our proprietary branch of SCREAM, particularly in terms of processing expense, real-time variable input, and in-game memory management. SCREAM is an extraordinary tool that allows us to have a level of control and engagement with the engine that is unparalleled in audio middleware. A lot of significant and amazing upgrades were made to SCREAM specifically for this game and, without the work of Jonathan Lanier, our audio programmer, and the Tools and Tech programmers at Sony who maintain the tool, very little of this would have been possible to do in the time we had. In many levels, more than half the in-game memory used is for Foley and memory management was a constant struggle.

Sonically, what are the differences between the Washington Liberation Front and the Scars (or Seraphites)?

Maged Khalil Ragab (MKR, Dialogue Supervisor): The WLF and Seraphites have very different philosophies. With that said, the Seraphites are everything the WLF are not. The Seraphites are a more minimalist group and operate based on the necessities of their community. This makes them more emotionally reserved, controlled, and far more disciplined than their counterparts.

The WLF are a highly trained militant group that are far more secular and enjoy what color in life they can find in such a gray world. Though their fight is meaningful to them, it is a job that allows them to have a home and a sense of belonging.

When directing our actors for these roles, we focused on the Seraphites’ cultural background and kept their emotions reserved. They’re a quiet people, hence the hushed nature of their timbre. The only time they express emotion is out of concern for their allies and venom for their enemies. For example, if they see an ally get killed, you’ll then hear that crack in the emotionally stoic armor that the Seraphites are known for.

The WLF, however, are far more emotive in just about every dialogue category we have. They are relatable in their expressions of fear, anxiety, and concern.

The starkness in the two factions required us to have reference performances at the ready for playback for our actors to make sure they were in alignment with their projection and emotion — or lack thereof, relative to the Seraphites. That was probably the most challenging part.

Innately, our cast of Seraphites would want to emote for very specific performances as we went deeper after dialing, and even for ourselves in the control room there was much debate and scrutiny about whether we were staying in alignment with the foundation we’d laid. It was a challenging and rewarding journey that couldn’t have been done without our actors’ dedication to the characters.

Then, of course, there’s the Seraphites’ whistle language. We knew that we wanted to add an ambiguous means of communication that reflected the Seraphite lifestyle. We researched hunter whistles, echo calls from places like Egypt during their recent revolution, and the one that was most impactful: a documentary about an island from Spain, which has a real-life whistle language.

A documentary on the whistled language of the island of La Gomera (Canary Islands), the Silbo Gomero

Initially, we cast a very talented musical whistler, whose performances blended in too much into our environment and sounded identical to bird calls. We discovered this around the time of our E3 gameplay reveal and we had to work fast to come up with something that aligned closer to our vision.

We ended up recording myself and our Lead UI Designer, Maria Capel, whose grandfather coincidentally is from that very island in Spain. From there, our Dialogue Coordinator, Grayson Stone, edited together a series of whistle variations that we based our language on. Each whistle represents a proper dialogue call-and-response of strategy with our dialogue system — whether it be on a simple patrol check-in, identifying line of sight of their enemy, or the echoing alarm of finding one of their own who’s been killed. The latter is very much like the lighting of torches from one mountain top to the next in order to propagate knowledge of a nearby threat.

We then cast two new professional whistlers to not only mimic the exactness of our designed whistles, but to do it in stages of degradation. It wasn’t realistic to us that every Seraphite was the perfect whistler, so we made four tiers: 1. the perfect whistler (the ones we designed), 2. the exact whistler (a mimic of our design), 3. the average whistler (slight variance in cadence and pitch), and 4. the less than average whistler (wind and pitch breaks).

These layers of variety gave a deep sense of and a slight misdirect to the whistle patterns and what they mean. We decided to also record our whistles using a hyper-cardioid shotgun mic as our main with lavaliers as our backup. This gave us the directionality we needed for the whistles to cut through faster and drier than using an omni-pickup pattern from our lavs.

Recording all 16 of our enemy actors of these factions is standardized across the board. Two lavaliers on motion capture caps just above each eyebrow (one main and one backup) — the same exact mic-pres and AD conversion through the 3.5 to 4 years of recording our talent.

This setup is a mirror from our motion capture stage so that our in-game dialogue closely matched our cinematics. Additionally, we recorded in the same room at Formosa Interactive for this group and the majority of our cast, for that matter. This provided the consistency we needed in order to be able to create a system that supported material stretching over several years, with sound organic and present to the moment in our game.

A part of this is attributed to the fact that we recorded with the same pair of actors standing in the same room position for every session. Prior to recording in any room, I would go to the extent of standing in each position clapping and reciting different vowels in various directions in order to understand the room modes that the studio had. This greatly informed us of the acoustic variances we might encounter during recording. Plus, we had our wonderful engineers informing the actors if they weren’t projecting certain lines in a specific direction due to it being more reflective when coming from that particular voice.

Mastering is a special cocktail of sorts. Each one of our actors has the same mastering chain with custom frequency-dependent settings per voice. The things that we wanted to keep the same are the things that should be close to identical across the board, which are the dynamics. Compression settings do not change; however, processes like de-essing, frequency-dependent dynamics control, and standard multiband compression (when needed) are customized to each voice and sometimes per session. Mics, pres, and the human voice will sound different from day to day, which is usually addressed as we vet our takes or in the polish phase in post-production. The trick behind not adjusting dynamics is really in leveling your mastered content that feeds the dynamic processing. This is the most handcrafted process of our sound. Each line is leveled by hand to manage each peak and/or syllable when needed. It also gives the results of riding the fader on a mixing console. If we have a dynamic performance, from spoken and up to yelling, we can manage that in a way that still hits the level jump expected without the quieter portion getting lost.

Mike Hourihan (MH, Supervising Dialogue Designer): We created a robust suite of dialogue for both groups so they would feel like full-fledged characters with emotions and connections to each other, but we also wanted to create a stark contrast between them.

The WLF are much more vocal and expressive in their dialogue. They coordinate and communicate their tactics verbally. They also progressively reveal emotion and frustration through changes in their dialogue when the odds are against them.

The Seraphites use a whistle language to obscure their tactics, which makes them much harder to read in battle and creates an ominous feeling that they are a coordinated “hive-mind.” This whistle language allows them to convey the same amount of tactical information to each other as the WLF, but it is encrypted to the player, until you start to pick up on those patterns.

Grayson Stone (GS, Dialogue Coordinator): The most immediate and obvious sonic difference between the two enemy factions is their communication before they’re alerted to the player’s presence. The WLF are a trained paramilitary group, calling out to each other and announcing their intentions: checking by a car, or in a house, etc., so that their allies know where they are.

The Seraphites, on the other hand, are initially more of a covert team, using an encrypted language in order to transmit only the most necessary information. Because some of the whistles cover a broader idea, they’re used to communicating less frequently, which adds an air of mystery and silence to their movements. The whistle language itself is built around whistle “phonemes” that carry particular weight or emotion behind them. While the player may not initially know exactly what each whistle means, they’ll be able to understand the emotion behind them.

For the sound of the Infected, how did it evolve for The Last of Us Part II? Also, what are some new stages of infected ‘creatures’ and what went into their sounds?

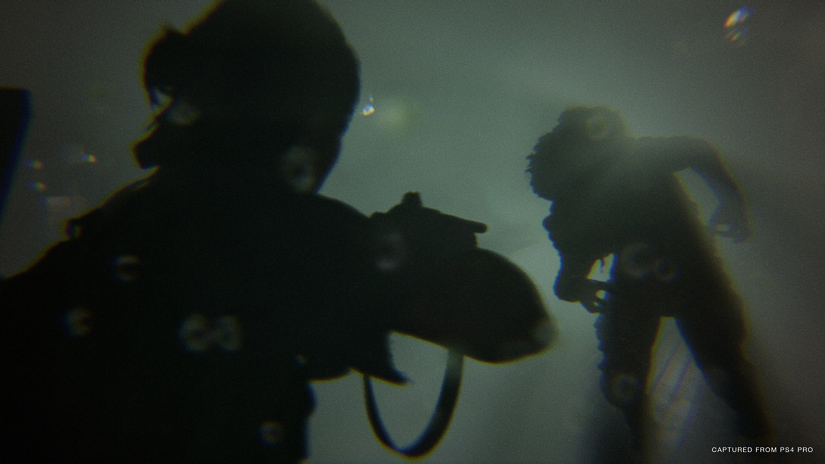

Beau Jimenez (BJ, Sound Designer): The goal for Infected sound design in this game was to cement the Infected as entities that were once human, as is the fiction of this universe. We wanted the players to hear the pain and misery in their vocals; hear them torn between their human intuition and the Cordyceps fungus’ influence.

As the Infected stages progress, their human cognition regresses, meaning you’ll hear less strain in the vocals as the Infected goes from Runner, to Stalker, to Clicker, etc. Sound is also reflective of the Infected’s visual look, in that the more the Cordyceps visually overwhelms the body, the more it overwhelms the natural human voice, which is why the Shambler, Clicker, and Bloater sound much less human than the Runners.

Every Infected class and all their character variations have been re-sound designed from the ground up. This includes all new recorded source material and completely new sound design. The implementation pipelines have been completely re-factored as well.

The first stage of the Infection, the Runners, needed to have the human vocals as raw as possible with a focus on the actor’s performance. Rob and I directed the Runner voice actors so that a conflict could be heard in their performances: a struggle to break free from the excruciating pain as the Infection takes over motor skills, a sadness knowing that they have lost everything and can’t overcome the Infection, and a horror as they act out appalling actions without control. No layering was done with this class: just simple editing!

The Stalkers were an interesting task in this game, as their AI, design, animation, and character models have been completely re-conceptualized and, of course, redone for the sequel. They’re the only Infected type that runs away from fights. They hide and reposition, while running on all fours, waiting for the opportune time to flank and get the upper hand on their prey.

Sound was crucial in this design, as they needed to be silent after running away to create a tension in the gameplay. Making their location unknown to the player and not visible in Listen Mode was the big design challenge that sound needed to support.

Sound design-wise, I wanted them to feel like they resided in a sweet-spot between Runners and Clickers, so the idea that they produce prepubescent clicks with sporadic, shrieking inhales was born. The fiction being that the spores have started to overwhelm their air passages causing them to choke, snort, and shriek.

The Clickers stayed true to the original concepts, as they were the staple Infected class from the first game. One giant advancement is that all of their associated sound lives in memory instead of being streamed from the hard drive. This benefited us, because we were able to tag animations with what we called ‘jolts,’ which are fast vocal elements or clicks tagged on a specific frame of an animation to amplify a jarring head turn or body contortion. This made them feel more reactive and threatening.

Also, the clicks were able to drive the Listen Mode lighting in real-time as well as lip-flap, which looks amazing! So, when you play the game, take note of the reactive jolts on certain frames of the systemic locomotion.
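To make the ‘jolt’ idea concrete, here is a minimal sketch of frame-tagged sound triggers. It is not Naughty Dog’s pipeline: the names `AnimationClip`, `InMemoryBank`, `advance`, and the sound names are all hypothetical, and a simple log stands in for real audio playback. It only illustrates why preloaded sounds help, since a sound that is already in memory can start on the exact frame it is tagged to.

```python
from dataclasses import dataclass, field


@dataclass
class AnimationClip:
    name: str
    frame_count: int
    # Hypothetical tag table: frame index -> name of a short "jolt" sound.
    jolt_tags: dict = field(default_factory=dict)


class InMemoryBank:
    """Stand-in for sounds preloaded in RAM, so playback can start on the tagged frame."""

    def __init__(self, sounds):
        self.sounds = set(sounds)
        self.played = []  # (frame, sound) log, in place of real audio output

    def play(self, frame, sound):
        if sound not in self.sounds:
            raise KeyError(f"{sound} is not loaded")
        self.played.append((frame, sound))


def advance(clip, bank, start_frame, end_frame):
    """Fire every jolt tagged on frames in (start_frame, end_frame]."""
    for frame in range(start_frame + 1, end_frame + 1):
        sound = clip.jolt_tags.get(frame)
        if sound is not None:
            bank.play(frame, sound)


clip = AnimationClip("clicker_head_turn", 30, {4: "click_fast", 17: "vocal_snap"})
bank = InMemoryBank({"click_fast", "vocal_snap"})
advance(clip, bank, 0, 10)   # first update covers frames 1..10
advance(clip, bank, 10, 30)  # second update covers frames 11..30
```

Walking the frame range between updates, rather than checking only the current frame, means a tag is not skipped when an update spans several animation frames.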

In terms of sound design, we relied heavily on our talented creature vocalists, many of whom returned from the first game. It was, of course, embellished with various source recordings, like a phlegmy gag I can do after a couple Famous Amos bite-sized cookies, which worked perfectly for the frenzy attack!

The Bloater’s sound evolved significantly in this project. Our goal was to lean its vocals more human-like than the first game. Moments like the Bloater charging at the player should send you into fight-or-flight mode. Hearing it bellow in pain as it tears pustules from its own flesh should resonate and terrify. Every vocal was designed to enforce that the Bloater is simply a human encrusted in years of fungi.

In terms of recording, we had two fabulous voice actors on the Bloater. One was used for all the roars, hits, swings, bellows, and yells, while the other could do the most heinous grumbling and fumbling murmurs that solidified the Bloater and paired perfectly with the content from the other actor.

The Bloater was also an interesting challenge because it could cause environmental damage. It can charge through or break down various walls in combat set-ups, so all of those kinetic wall-breaks needed to sound vicious to get the point across: keep your distance from this beast!

The Shambler was near and dear to me on this project, as it was the newest Infected addition to The Last of Us universe, one that I was able to design with no preconceived notion of its sound. My early prototypes sounded like a gross, sopping-wet, wheezing old man from hell, and to be frank, the final product stayed true to that description! The only addition was the layered vocals from our talented voice actors. These vocals grounded the character and made its humanity more believable.

The elements included a host of bizarre wet, mouth sounds recorded with a Sanken CO-100K and slowed down in post, a lot of wheezy content from my wheezy, ‘old man voice,’ various gore recordings, and the source from our Shambler voice actors.

The high-level concept for the Shambler’s vocal design is that, in lower tension states, like “unaware,” they sound more relaxed, which results in your hearing the air pass through their sickly, festering respiratory system. In higher tension states, like combat, the vocals begin to poke out more as it becomes more agitated.

Some fun recording tidbits: the Shambler clicks were created by sticking the Sanken into a plastic bucket, and performing palatal click sounds through a vinyl tube into the bucket. For the Shambler pustule explosion, we recorded a life vest getting inflated with a CO2 cylinder, which created a beautifully aggressive airy burst sound. We also performed with a bellows filled with milk-soaked oatmeal, which created some gross (aka awesome) source material and helped bring the pustule visuals to life.

Overall, we stayed true to the sonic vision of the first title, while trying to improve technical and design aspects. I hope all the months of hard work came across!

Were there any other established sounds from the first game that you were able to reuse for Part II? How did you have to modify those sounds to make them fit?

RK: There are a few sounds related to The Last of Us that I consider iconic and part of the sonic signature of that game. The drone that plays when you are about to be spotted as well as the “gong” that plays when you’re spotted are both made from the same elements as the first game.

Originally, both elements were stereo. For The Last of Us Part II, Beau Jimenez took those elements and made them wider and slightly bigger. The drone became quad and the “gong” became a 5.1 sound. The feeling is identical to the first game, but they are slightly higher fidelity and work better in the mix of The Last of Us Part II.

Another small touch is that Joel’s shotgun and revolver gunshots were designed utilizing the original assets. They were modified slightly and layered with some new material and the implementation was updated to match the system for gun fire in The Last of Us Part II. I thought it was important that Joel’s weapons pay homage to Phil Kovats’ awesome work with the guns for The Last of Us.

What was the most challenging aspect of Part II to design? What went into it?

RK: I wouldn’t say that there was any one sound that was more challenging than any other, from a design standpoint.

The challenging thing on this project was the sheer scope of the game. It can be upwards of a 30-hour experience, depending on play style. At the level of detail we were trying to achieve, the challenge became getting absolutely everything covered at as high a quality as possible in the time that we had. It’s the biggest game that Naughty Dog has ever made by a wide margin. It would not have been possible without the incredible hard work from the amazing group of people that made up our audio team.

What were some challenges in mixing this game?

RK: Mixing a game like The Last of Us Part II is fairly unique in video games. We shoot for a very high dynamic range mix and it’s very difficult to balance that with the need to make sure the player is always hearing story-critical dialogue.

The levels in The Last of Us Part II are much bigger than pretty much any of our games, so trying to keep the dialogue intelligible, while also honoring the world and making it sound believably obstructed or occluded depending on what the player is doing, was very difficult. There is no perfect setting or solution due to the player having 100% agency during most of the game.

Another area of the mix that required a lot of focus was the transitions between gameplay and cinematics, which have a more traditional linear mix. The goal was to make it completely seamless so that the player never notices the switch.

We utilized many techniques to achieve these transitions. First, our ambience plays through from the game, while the cinematic mix plays on top of it. This ensures that there is no bump when the transition happens.

Likewise, the music is also not a part of the 7.1 channel mix, but instead played separately so that it can both pre and post lap the cinematic where necessary.

We also share our impulse responses with Formosa, who are responsible for our cinematic mixes, which ensures that reverbs are identical between gameplay and cutscenes. We got a very cool piece of tech on this game that allowed us to use the same impulse response and filtering for things like gasmask futzing. The settings match exactly, and there are a few scenes where it sends gameplay dialogue through the real-time convolver futz into the 7.1 cinematic mix and then back to gameplay again and you can’t notice the switch. It made our futzing sound so much better on this game, because in the past we were very limited on what we could use for real-time futzing.
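The reason a shared impulse response keeps the two mixes matched can be shown with a small sketch. This is an illustration only, not the team’s convolver: `convolve`, `GASMASK_IR`, and the sample values are hypothetical, and a production convolver would typically use much longer impulse responses and a faster, FFT-based method. The point is simply that convolution is deterministic, so the same dry signal and the same impulse response give the same result on either path.

```python
def convolve(signal, impulse_response):
    """Direct-form convolution: each input sample triggers a scaled copy of the IR."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, sample in enumerate(signal):
        for j, tap in enumerate(impulse_response):
            out[i + j] += sample * tap
    return out


# Hypothetical, tiny "gas mask" impulse response; a real IR would be thousands of samples.
GASMASK_IR = [0.6, 0.3, 0.1]

dry_line = [1.0, 0.0, -0.5, 0.25]  # made-up dry dialogue samples

gameplay_futz = convolve(dry_line, GASMASK_IR)   # stands in for the real-time path
cinematic_futz = convolve(dry_line, GASMASK_IR)  # stands in for the cinematic mix path
```

Because both paths apply the same operation with the same data, a line of dialogue can cross from one to the other without a change in character.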

By and large, I think it was a pretty successful effort and it’s something that I am personally very proud of.

Want to learn even more about what went into designing the sound for The Last of Us Part II? Check out this series of tweets by Beau Anthony Jimenez where he shares additional details on how the sounds of the Shambler were made – and here he gives you a game audio deep-dive on the breathing system in the game. In this series of tweets, here, Jesse James Garcia shares lots of details on what went into creating the sounds of breaking glass.

What format did you mix in, and how did you take advantage of the surround environment to help players identify threats?

RK: We principally mix in 7.1, but we also support Mono, Stereo and 3D audio by way of the Sony Platinum Headset.

Sound placement is critical for tactical feedback in The Last of Us Part II. It can tell you where an enemy is, or how far away something is, all just with sound. The audio engine that Jonathan Lanier has created works so that the surround channels are equal to the front channels. Assuming you have a correctly tuned setup at home, there should be smooth panning and imaging throughout the surround field.

Over the last 10 years, the audio implementation philosophy at Naughty Dog has been consistent. Sounds need to emit from where they would be realistically in the world. If it’s the sound of a bird in the ambience, it needs to come from a tree or atop a telephone pole. If it’s the enemy footsteps, they actually play from their feet. I could go on, but all that means is that we have been thinking about and working with 3D audio for a long time. It translates extremely well not only to our 7.1 mix, but also to our TV and Headphone mixes.

The added benefit of working this way means that it also translates well to the 3D mix for the PlayStation Platinum Headset. That 3D mix takes the elevation information that was already there from the sound engine and translates it to height both above and below the listener.
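As a rough illustration of why world-placed emitters already carry height information, the sketch below derives a direction from two positions. It is not the Naughty Dog engine: `direction_to`, the axis convention, and the example coordinates are assumptions made for this example.

```python
import math


def direction_to(listener, emitter):
    """Return (azimuth_deg, elevation_deg) of emitter relative to listener.

    Assumed convention: x is right, y is forward, z is up; the listener faces +y.
    Azimuth 0 is straight ahead, positive to the right; elevation is positive above.
    """
    dx = emitter[0] - listener[0]
    dy = emitter[1] - listener[1]
    dz = emitter[2] - listener[2]
    azimuth = math.degrees(math.atan2(dx, dy))
    elevation = math.degrees(math.atan2(dz, math.hypot(dx, dy)))
    return azimuth, elevation


# Hypothetical positions in metres, with the listener's ears at 1.7 m.
bird = direction_to((0.0, 0.0, 1.7), (5.0, 5.0, 8.7))       # bird on a pole, ahead-right and above
footstep = direction_to((0.0, 0.0, 1.7), (0.0, -3.0, 0.0))  # footsteps behind, at floor level
```

A channel-based mix would mostly use the azimuth, while a 3D headphone mix can also use the elevation, which is positive for the bird and negative for the footsteps.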

Can you talk about your process of developing the blind accessibility features (through user testing, etc.) and what core concepts you were able to implement? I heard the game can be completely played through sound. That’s amazing! Can you talk about your use of text-to-speech and other methods of detailed spatial awareness?

JM: We’re very excited to share our accessibility features with everyone, and even though we did a bunch of in-house focus testing with our accessibility consultants, we are always interested in finding new and better ways to make these user-facing tools even more empowering.

Accessibility Audio Cues in The Last of Us Part II have been designed to work alongside the game’s audio and bring additional gameplay information into the auditory realm to assist players in all aspects of the game, from basic navigation to gameplay interactions, scavenging, and combat. The core of the Audio Cue system is an association of easily identifiable sounds with the commonly used actions in the game. These core action sounds are then augmented with extra sweetener sounds to give more context to the action the player has available. As the player moves around the world using audible navigation assistance, they will hear interactive Audio Cues come into range indicating possible interactions, pickups, or story elements. A sonar is also provided to audibly locate item pickups and enemies in the vicinity, and when combat occurs, it can be managed using real-time feedback for grabbing enemies, scavenging ammo, ranged combat feedback, or letting you know when to dodge an incoming melee attack.
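The structure described here, a core sound per action plus optional sweeteners, triggered as things come into range, could be sketched roughly as follows. Every name in it (`Interactable`, `CORE_CUES`, `SWEETENERS`, `cues_in_range`, the cue names, and the range value) is hypothetical and chosen for illustration; it does not reflect the game’s actual data or code.

```python
import math
from dataclasses import dataclass


@dataclass
class Interactable:
    name: str
    action: str      # e.g. "pickup", "interact"
    context: str     # extra detail that may select a sweetener
    position: tuple  # (x, y) in metres, hypothetical units


# Hypothetical tables: one core sound per action, optional sweetener per context.
CORE_CUES = {"pickup": "cue_pickup", "interact": "cue_interact"}
SWEETENERS = {"ammo": "swt_ammo", "door": "swt_door"}


def cues_in_range(player_pos, interactables, cue_range):
    """Return (name, [sounds]) for every interactable within cue_range, nearest first."""
    hits = []
    for item in interactables:
        distance = math.dist(player_pos, item.position)
        if distance <= cue_range:
            sounds = [CORE_CUES[item.action]]
            if item.context in SWEETENERS:
                sounds.append(SWEETENERS[item.context])
            hits.append((distance, item.name, sounds))
    hits.sort(key=lambda hit: hit[0])
    return [(name, sounds) for _, name, sounds in hits]


world = [
    Interactable("ammo box", "pickup", "ammo", (3.0, 4.0)),
    Interactable("workbench", "interact", "bench", (1.0, 0.0)),
    Interactable("far door", "interact", "door", (40.0, 0.0)),
]
result = cues_in_range((0.0, 0.0), world, 10.0)
```

Keeping the core sound fixed per action is what makes a cue recognizable at once, while the sweetener adds context without changing that identity.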

In terms of sound, what are you most proud of on The Last of Us Part II?

RK: I’m going to cheat this question a little bit. I’m extraordinarily proud of everything this team was able to accomplish in this game. From the dialogue to the sound effects, music, and localization — everything just feels right. I think we honored the legacy of the first game, as well as forged a new path of our own. I personally think it is one of the best sounding games we have ever made.

That all being said, the thing that I am truly most proud of is the team that we were able to put together to make this thing. The folks on this team are incredibly hard-working but more than that they are just great people. This is as tight-knit a team as any I have ever seen and I am extremely proud and honored to have led them. Any praise that comes our way is a credit to them and their incredible work.

I need to shout out these wonderful people — Audio Programming: Jonathan Lanier. Dialogue: Maged Khalil Ragab, Mike Hourihan, Emily Scrivner, Grayson Stone, Sean LaValle, Julius Kukla, Thomas Barrett, Jaime Marcelo and Erik Schmall. Sound Design: Neil Uchitel, Beau Jimenez, Justin Mullens, Sam St. Clair, Jesse Garcia, Michael Marchisotto, Jordan Denton, Derek Brown, Chris Walasek, Eolyne Arnold, Matt Telesey, our partners at Formosa Interactive, including Shannon Potter, Chad Bedell, et al. Localization: Kurt Mendoza and Caroline Lavaroni, as well as our partners at both SIEE and Formosa Interactive. Music: Scott Hanau, Rob Goodson, Scott Shoemaker, et al at Sony PD Music. Naughty Dog QA Audio: Jessie Chang, Sean Petersen, Philip Meneses, and Michael Gevorkyan.

A big thanks to Rob Krekel and the Naughty Dog sound team for giving us a behind-the-scenes look at the sound of The Last of Us Part II and to Jennifer Walden for the interview!