Did you know there’s a playable piano in Half-Life: Alyx? And there are videos on YouTube of players plinking out songs on it? Search “half life alyx piano” and prepare to be amazed.

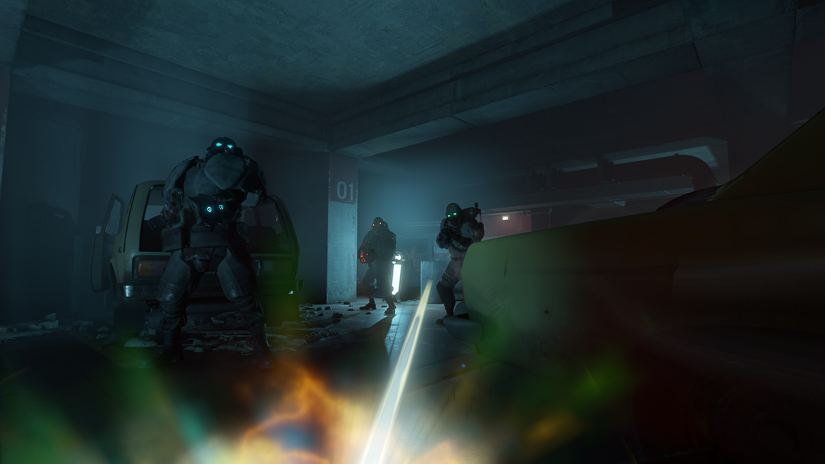

That detail is just one of the many lovingly crafted sonic joys that live in this virtual world. According to sound designer Roland Shaw, when Valve’s first VR experience was created they found that players were interested in interacting with objects in the environment and so they wanted to seriously expand on that for Half-Life: Alyx. The spaces are alive with light buzzes and water drips. If you drop a glass bottle, it rewards you with a lovely smashing sound. There are layers upon layers of sonic details to satisfy both the quest-oriented players driven to beat the game quickly and the explorer-players who want to turn over every leaf in the game.

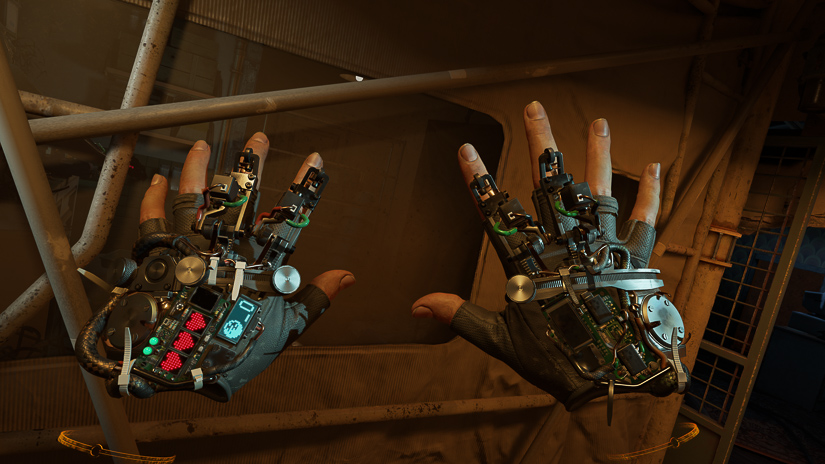

Here, Valve sound designers Roland Shaw, Dave Feise, Kelly Thornton and composer Mike Morasky talk about designing sound for a virtual space, their approach to Half-Life’s unique creatures and weapons, creating the Gravity Gloves, using Foley to make the NPCs feel real and to sell the player’s physical presence in the space (because a player only sees their hands), designing proprietary sound tools for the Source 2 engine, and more!

Half-Life: Alyx Announcement Trailer

This game is set between the events of Half-Life and Half-Life 2. Were there sonic elements or assets you could bring into Half-Life: Alyx from either of those games? If so, what were some challenges in getting those assets to fit into this game?

Dave Feise (DF): There definitely are sounds from the earlier games in Half-Life: Alyx. I think it’s a tricky topic because on the one hand a lot of those sounds are iconic and players feel a sense of nostalgia about them, but on the other hand some of them sound quite dated and in need of freshening up. I would’ve been happy if we’d decided to not use any sounds from previous games and decided instead to only use those sounds for inspiration and a jumping off point, but I think I’m in the minority there.

One of the main challenges I found was getting the older sounds to fit into the world well. HRTF processing relies on filtering frequencies to simulate our head and ears interfering with sounds in real life, but a lot of the older Half-Life sounds are quite simple in their frequency content so HRTF doesn’t work as well on them and they end up sounding kind of non-positional. Several of the older sounds have been dirtied-up a little bit just to give them a bit more frequency content in order to spatialize better with HRTF.

Half-Life: Alyx is Valve’s ‘flagship’ VR game. What were some hurdles — creatively and technically — you faced in designing and implementing sound for this VR game?

Roland Shaw (RS): Two things come to mind that, based on testing, I would suggest need a little more attention in VR — scale and detail.

The scale of everything is right there in front of you; there is much less of an abstraction of it via a screen. We identified the implications of that years ago when working on a product with a similar level of realism in Aperture Robot Repair. GLaDOS went from a 2D object that (in her non-potato form) simply took up some generous screen real estate to a 3D monstrosity of huge metal panels and thick cables with almost tangible weight and power, especially when animated. We had to meet that, and found testers responded well when sound matched scale in a consistent manner across elements. This helped immersion, but our ability to push the idea of weight and size as a creative tool for emotive effect was somewhat diminished. This turned out not to be an issue, as the trade-off was worth it: there are plenty of tools left in the Sound Design box to achieve multiple goals, and workflow-wise it is just a slightly different set of considerations to pivot to.

Regarding detail, again in Aperture Robot Repair, we saw testers trying to pick up and play with everything, and stick their head into animated objects expecting to hear suitable feedback and intricate details. For Half-Life: Alyx, we set out to meet and ideally exceed those expectations. So picking up most props and moving them will result in relevant audio feedback — aerosol cans rattling, matches in matchboxes, ammo in clips, and hundreds of prop details. The environments are literally covered in layers of tight falloff details like lights humming and water pipes dripping. Compared to regular games, the creatures in Half-Life: Alyx appear to have a new level of being; they seem more like a living thing in your direct vicinity. To meet that, they have breathing sounds when they’re not otherwise vocalizing, and multiple levels of sonic detail in their movements that are revealed as they approach. Many of these elements are subtle by design so as to not detract from gameplay feedback sounds, but build together to create a rich interactive soundtrack.

DF: To expand on Roland’s answer slightly, I think in VR the scale can be played with a little bit by the player. When you get close to something in-game, in a way you’re sort of zooming into it rather than getting closer, and the effect is so much more pronounced than in a flat-screen game that I think it warrants rethinking the way that even small objects sound when you’re really up close to them.

What were some things to consider in terms of pace, intensity, and proximity when designing sounds for such an immersive experience?

RS: Dave certainly put a lot of effort into creating detailed and interesting soundscapes given that we saw people taking their time to explore spaces. We were also experimenting with various falloff and panning curves right up until the end; I think Emily has a great ear for those.

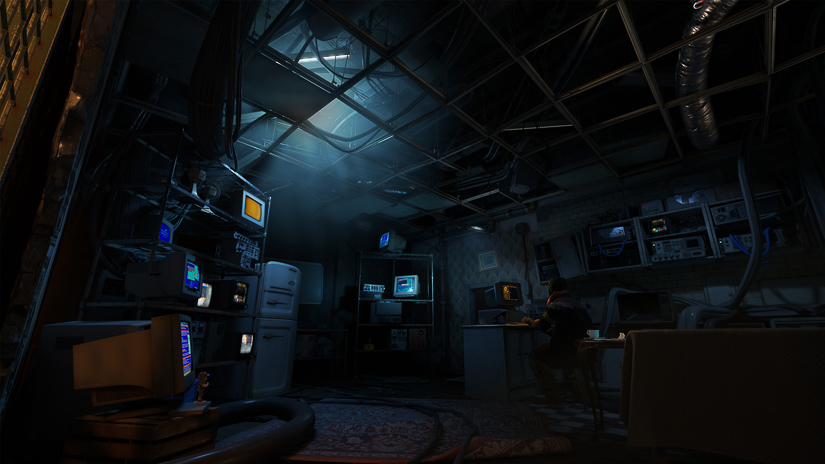

DF: One of the things we noticed really early on in playtests was how much slower most players were in VR compared to WASD. Areas that players would blitz through in an ordinary game suddenly became interesting corners to explore in VR. It was really important to me that those players would be sonically rewarded for taking their time, so to that end, there is a lot of layering and detail in the ambient sounds that might not otherwise be there.

How were you able to use sound to help the player navigate this world? To identify threats and where they’re coming from? To effectively interact with objects in the environment?

Mike Morasky (MM): On a slightly bigger scope, early on we were having a hard time keeping the concept of a “quest” fresh in the player’s mind without hammering them with dialogue over and over. We already had some test musical sound effects happening at the mid-game climax. We ended up making it loud and directional such that as you approach the “Northern Star” you can hear this distant, occasionally repeating sound coming from the direction you’re headed. Once it’s revealed what that sound is, it continues to play, occluded to differing degrees but still from the direction of your destination. While you make the multi-hour journey through the hotel you’re always aware of the sound of your goal and where it is in relation to where you’re going. We also use a version of this concept later in the game as well but to lesser effect.

Was Foley a more valuable sonic element in Alyx than in Half-Life or Half-Life 2? Why or why not?

RS: Yes, for both the NPCs and the player. Related to my comments on detail above, the presence provided by intimate Foley was a key element in increasing the believability of NPCs and added to the drama of being close to enemies.

For the player Foley, selling the idea that players had a physical presence in City 17 seemed a no-brainer for increasing immersion, and started with some sound scripts audibly reacting to arm and body movement. Much like in linear media, this layer of content is mostly subtle, but without it something just seems missing. Once that was working and footsteps were covered, testers mentioned wanting to hear a louder sound when dropping down and landing. This told us that they were sold on the idea that they had a physical presence in our virtual world, and we happily obliged.

What went into creating the sound of the Gravity Gloves? (The player touches a lot of things in this game. The list of ‘grab’ sounds must have been massive!)

DF: The sounds the gloves themselves make consist of several different parts: there’s a loop that plays from the hand, and “rollover” that plays from the object you’re pointing at. Additionally, we continuously spawn looping sounds that fly from your hand to the object to give some sense of motion to the beam. When you close your hand there’s a ping sound that plays from the object, and then another that plays from the hand when you flick your wrist to bring the object to you.

All synthetic sounds are a combination of the Access Virus and Serum, and there are a bunch of recorded sounds that are mostly mechanical (stuff like servos, typewriters, clocks, etc.) that are being pretty heavily effected with GRM Tools. The launch sound has a bunch of air release type samples in it (also heavily processed) to give it a satisfying *foomph*.

RS: There are only a few hand grab sounds, a deliberate decision to provide clear, familiar feedback. However, when interacting with props, they’re complemented with a physics impact sound, of which there are many thousands of possible variations owing to the large number of prop-specific sounds we have and the velocity-driven layering employed.
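As a rough illustration of how prop-specific sounds and velocity can multiply into many variations, here is a minimal sketch. It is not Valve's implementation: the velocity bands, thresholds, file names, and the `pick_impact` helper are all hypothetical, and it selects a single band per impact rather than blending several layers.

```python
import random

# Hypothetical velocity bands for one prop: (minimum speed in m/s, band name,
# number of recorded variations). None of these numbers come from the game.
BANDS = [
    (0.0, "soft", 3),
    (1.5, "medium", 4),
    (4.0, "hard", 4),
]
FULL_GAIN_SPEED = 6.0  # assumed speed at which the impact plays at full gain


def pick_impact(prop, velocity, rng):
    """Return a (sample name, gain) pair for one physics impact."""
    speed = abs(velocity)
    band, variations = BANDS[0][1], BANDS[0][2]
    for threshold, name, count in BANDS:
        if speed >= threshold:
            band, variations = name, count
    index = rng.randrange(variations) + 1
    gain = min(speed / FULL_GAIN_SPEED, 1.0)
    return f"{prop}_impact_{band}_{index:02d}.wav", gain


rng = random.Random()
for speed in (0.5, 2.0, 10.0):
    print(speed, pick_impact("bottle", speed, rng))
```

With even a few hundred props, a handful of bands per prop, and several variations per band, the number of distinct results climbs into the thousands quickly.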

‘Half-Life: Alyx’ Gameplay Video 3

What went into the weapon design? How did you create the gun sounds and how do you bring the elements together in-game?

DF: All the weapons in the game are based on layering and combining four different elements per shot: there’s “Head,” “Body,” “LFE,” and “Tail.” Head and body go through HRTF processing to make the guns sound positional, while LFE and tail skip HRTF to sort of smoosh the sound out a bit more. The four layers each have some number of variations (usually three to five) that get picked from when the gun fires in-game.

Head is generally a sharp transient sound, body is generally pretty punchy and full frequency, LFE is usually quite low-passed, and tail is mostly reverb and decay-type sounds. This varies somewhat from gun to gun but it’s the basic pattern I went for.
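The four-layer pattern described above can be sketched in a few lines. This is only an illustration under stated assumptions: the layer names and the HRTF routing come from the interview, while the variation counts, file naming, and the `build_shot` helper are hypothetical.

```python
import random
from dataclasses import dataclass
from typing import List

# Layer names and HRTF routing follow the interview; the variation counts
# (within the "three to five" range mentioned) are made up for illustration.
LAYERS = {
    "head": {"variations": 4, "hrtf": True},
    "body": {"variations": 5, "hrtf": True},
    "lfe": {"variations": 3, "hrtf": False},
    "tail": {"variations": 3, "hrtf": False},
}


@dataclass
class LayerVoice:
    layer: str
    sample: str
    hrtf: bool  # True: spatialized with HRTF, False: bypasses HRTF


def build_shot(gun: str, rng: random.Random) -> List[LayerVoice]:
    """Pick one random variation per layer for a single shot."""
    voices = []
    for layer, cfg in LAYERS.items():
        index = rng.randrange(cfg["variations"]) + 1
        voices.append(LayerVoice(layer, f"{gun}_{layer}_{index:02d}.wav", cfg["hrtf"]))
    return voices


shot = build_shot("pistol", random.Random())
for voice in shot:
    route = "HRTF" if voice.hrtf else "bypass"
    print(f"{voice.layer:>4}: {voice.sample} ({route})")
```

Because each layer picks its variation independently, consecutive shots rarely repeat the exact same combination.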

There might be exceptions, but I believe all guns have some elements that I recorded, some that are from a library, and some that are synthesized. We didn’t do a gun session for this game for two main reasons. First, there’s already a ton of great recordings out there, and second, we weren’t making a “Gun Simulator” where authenticity was important to us. Most (possibly all) of the guns use Krotos Weaponiser in some fashion, even if it’s just for sample playback. Synthetic sounds are from Serum mostly, and LFE sounds are a mixture of recordings, Kick2, and Enforcer.

What went into creating the sound of some of the creatures/enemies a player encounters?

RS: I’m glad you said creatures and not monsters. I’d spoken with animators about my intention to treat enemies like the headcrabs as creatures with the ability to be monstrous, just like many regular animals are, as opposed to just “monsters.” They liked that, probably because it is an idea I stole from Harryhausen.

For the ‘barnacle,’ gurgling custard helped blend together some heavily pitch-shifted human and camel sounds.

Kelly Thornton (KT): The bloaters used various human and animal sources. They were heavily processed to remove most of the sense of them being “of this earth.” It would be a disaster to think of a certain animal or a human when peering around the corner at a bloater, hearing it stretch its blow bags; alien believability would take a hit immediately.

We wanted them to seem otherworldly and very strange yet have a familiarity in a few key situations. An example is the swelling when you are within a certain distance of them and the pain vocalization when they take damage. They needed to sound just enough in line with what we humans understand as pain or exertion vocalization that the player understands the in-game mechanic that was happening. Lots of testing here led to the final product. Frightening, ominous, mysterious, weird with a slight hint of understandability was the goal.

RS: For the ‘headcrab,’ ‘toxic headcrab,’ and ‘headcrab zombie,’ there were plenty of pitch-shifted animals in the first two, and some talented voice actors and my less talented self in the latter. Sound Designer Michael Leaning clued me into a cool technique for making some ghastly humanoid breathing sounds that he used in his sample library “The Undead,” which was very useful. Thanks again, Michael!

For the ‘Lightning Dog,’ the mini lightning bolt sounds were lots of tightly edited zaps and pops processed with relatively complex chains including resonant filters and delay feedback. Sound Designer Mattia Cellotto has a sample library called Polarity which provided wonderful source to build from.

The ‘Antlion’ was created from European Starling recordings with a Telinga Parabolic mic and processed using Max/MSP patches.

The Half-Life: Alyx team talks player locomotion in this behind-the-scenes deep dive. Valve developers Jason Mitchell, Luke Nalker, Greg Coomer, and Roland Shaw share some of our early prototypes, and walk through user interface, audio, and player movement discoveries that led to the game’s final designs:

What was your approach to the tech sounds in the game? What were some of your sources, processing, or software used to create the more sci-fi sounds?

DF: For the tech stuff I worked on there’s a mix of source that I’ve recorded (lots of motors and mechanical ratchet type stuff), some library sounds, and a lot of synthetic sounds and effects. Looking back at my sessions there’s a lot of Serum and Massive, and a lot of GRM tools. Enforcer features heavily too.

RS: I used Xfer Serum, Europa in Reason, modulated vocoders, stuff in my Dad’s garage, using tight edits for rhythm.

Did you do any field recording or custom recording for Alyx? If so, what did you capture and how did it fit into your sound design for the game?

DF: I didn’t do a ton of field recording for Alyx but I did some. Mostly I recorded ambient sounds like room tones, and outdoor environments. I did quite a lot of machinery recordings like fans and air ducts, and a bunch of electrical recordings of different light bulbs and light fixtures. Roland has a really cool electromagnetic pickup mic and I got a bunch of really useful stuff from that!

The ambient recordings mostly got mixed together with other recordings to make the environments you hear in the game, but a lot of the other stuff is pretty much just the edited recordings with some EQ and room reverb on them. The sound of flickering lights is a good example; I got one of those handheld work lights and put a CFL bulb in it, mostly unscrewed, and then kind of moved it about in the light socket to make that flickering sound. I edited those recordings, and put a little secret sauce on them, and then played them back randomly in-game.

One of the most useful recordings I got is actually an impulse response set I made at some decommissioned military bunkers kind of near here. There are these huge areas where these big guns used to sit that are basically concrete walls on three sides so it gives this very nice flutter echo/slapback thing that makes any sound run through it sound very much like it’s in an urban environment.

RS: As mentioned, my Telinga Parabolic mic was used for difficult-to-isolate source like some bird species, used for creature source. It is not a microphone rig I use for many applications, but it does do one thing very well.

Mostly for me though, it was a lot of studio-based recordings. One memorable recording was of two enormous steaks with a thick layer of mayonnaise in between. Peeling those up slowly and slapping them down became the sound of a certain creature’s (no spoilers) mouth opening and closing. There were also many days of prop recordings for physics sounds, bolstered by material from Sweet Justice in the UK. Between us, Dave and I have enough gear to record anything anywhere, but we tend to focus on gathering material that will be useful for achieving specific goals and be most impactful for the player experience.

What was the most challenging level or scene to design sound for? What were your challenges?

DF: It’s gotta be Jeff and the distillery, right? I don’t think anything else took nearly as much time and effort and iteration as Roland put into that.

RS: I suppose so, yes. At a high level, what made it difficult is that we were trying to achieve a relatively complex set of goals in presenting a new creature and a modified approach to gameplay for the player, who we realized through testing had increased specificity in their expectations for sound content and level, on top of common development challenges like the systems we relied on being delicately balanced, changing underneath us, and content being WIP.

There were a number of conflicting but interdependent tasks the sound team had to take on. Testers expected to know where the creature was, direction and distance, at all times. They also expected to be clearly informed that they might be about to encounter or perform something that would be dangerous because it would result in an even louder sound. The sounds that attracted the creature had to make sense. The creature reacting to a sound with various levels of severity also had to be telegraphed.

This was on top of all the other tasks sound was already doing and has to do for an effective scene, particularly in a horror scenario like that one. This required a lot of iteration and teamwork across disciplines. Morasky, who nailed the music, myself and the rest of the team that worked on it are all very happy with the positive responses we have seen and read, and I should make clear that they designed the sound of that creature and the related sequences as much as I did.

‘Half-Life: Alyx’ Gameplay Video 1

Half-Life: Alyx was developed with the Source 2 engine. Was this a good fit for sound implementation and real-time processing?

DF: I think it was a really good fit, in large part because we made it that way. We didn’t start this project with the audio engine in its current form or anything close. A lot of work has happened in the last four years to make it the powerful tool that it is.

MM: The Source 2 audio engine was designed specifically to be an “open system,” as extensible and flexible as possible. Every Valve game currently using the Source 2 engine has distinctly different needs, both overall and in how individual sound events are handled. This same flexibility was utilized heavily on Half-Life: Alyx as each artist developed tools and systems unique to their needs, then shared and co-developed individual components for a vast yet cohesive set of tools and audio behaviors.

The system also has multiple forms of hierarchical inheritance, meaning that we were able to create some top-level systems that were inherited by all the other behaviors that utilize them. This made it relatively easy to create and manage our overall spatial/mix cohesion and signal flow right up to the end of development while limiting the need to address changes across the thousands of sound events in the game.
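To illustrate the general idea of hierarchical inheritance (not the Source 2 data format, which is not described here), the sketch below uses Python's `collections.ChainMap`. All setting names and values are hypothetical. A single change at the top level shows up in every sound event that inherits from it, without touching the events themselves.

```python
from collections import ChainMap

# Hypothetical top-level mix settings shared by every sound event.
root = {"spatializer": "hrtf", "reverb_send": 0.2, "volume": 1.0, "master_gain_db": 0.0}

# Each level stores only its overrides and falls back to its parent.
weapons = ChainMap({"reverb_send": 0.35}, root)
pistol_fire = ChainMap({"volume": 0.9}, weapons)


def resolve(event):
    """Flatten an event's inherited settings into a plain dict."""
    return dict(event)


before = resolve(pistol_fire)

# One top-level change propagates to every inheriting event.
root["master_gain_db"] = -3.0
after = resolve(pistol_fire)

print(before)
print(after)
```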

The audio engine has two different subsystems. One handles game events, starts sounds, and manages positions, spatialization, buss send levels, etc. The other handles higher frequency tasks like buffer summing, DSP, etc. That second “low level” system also came in very handy, not only as “voice graphs,” which Dave mentioned, but also in how we constructed the “main mix” graph. We utilized compression with limiting, dynamic buss sends and sidechaining to compressors as well as our slick dynamic EQ system to manage our per buss and overall levels to a degree that we haven’t been able to before.

What were some audio-related tools that were useful for this game, either proprietary or 3rd-party?

DF: I don’t know how much we want to give away about our internal tools here, but for me, the indispensable parts of our system were voice graphs, fixed rotation sound events, animated sound events, and the global stack/opvars. There are probably more but these are the ones that came to mind.

Voice graphs are (usually) simple in-game DSP chains that exist on a per-sound basis. So you could have a sound that uses a voice graph with a simple low-pass filter where the cutoff of that filter is tied to the distance to the player so two instances of the same sound would have different filter values based on where they were in the game. That’s a simple example, but you get the idea.
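Dave's low-pass example can be sketched outside the engine as follows. This is a minimal illustration, not the voice graph system itself: the distance range, cutoff limits, and function names are assumptions, and a basic one-pole filter stands in for whatever filter the engine uses.

```python
import math


def cutoff_for_distance(distance, near=1.0, far=30.0, max_hz=18000.0, min_hz=800.0):
    """Map distance to a cutoff frequency, interpolating on a log scale.

    All four range values are assumed for illustration.
    """
    t = min(max((distance - near) / (far - near), 0.0), 1.0)
    return max_hz * (min_hz / max_hz) ** t


def one_pole_lowpass(samples, cutoff_hz, sample_rate=48000):
    """Run a simple one-pole low-pass filter over a list of samples."""
    a = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    out, y = [], 0.0
    for x in samples:
        y += a * (x - y)
        out.append(y)
    return out


# Two instances of the same sound at different distances get different filters.
step = [1.0] * 8
near_voice = one_pole_lowpass(step, cutoff_for_distance(2.0))
far_voice = one_pole_lowpass(step, cutoff_for_distance(25.0))
print(round(cutoff_for_distance(2.0)), round(cutoff_for_distance(25.0)))
```

The far instance rises more slowly on the same input, which is the duller, more distant character the filter is meant to give.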

Fixed rotation: in order to create looping ambient sounds that sounded good and not glued to the player’s ears, we made a system for taking 4-channel sounds and positioning each of the four channels in the world surrounding the player.

The rotation of the sounds is fixed, i.e. the player can rotate in place and the sounds don’t move, but they maintain a set distance from the player so if the player walks forward the sounds move with them.
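The fixed-rotation behavior can be expressed as a small geometric sketch. The channel angles, the radius, and both helper functions are assumptions for illustration; only the behavior (emitters follow the player's position but ignore the player's rotation) comes from the description above.

```python
import math

# Assumed layout: one emitter per channel at world-fixed angles (degrees).
CHANNEL_ANGLES = [45.0, 135.0, 225.0, 315.0]


def emitter_positions(player_xy, radius=5.0):
    """World positions of the four emitters; they depend only on player position."""
    px, py = player_xy
    return [
        (px + radius * math.cos(math.radians(a)), py + radius * math.sin(math.radians(a)))
        for a in CHANNEL_ANGLES
    ]


def relative_angles(player_yaw_deg):
    """Angle of each emitter as heard by the listener, given the player's yaw."""
    return [(a - player_yaw_deg) % 360.0 for a in CHANNEL_ANGLES]


# Walking forward moves the emitters with the player...
print(emitter_positions((0.0, 0.0)))
print(emitter_positions((10.0, 0.0)))
# ...while turning in place changes only where they are heard from.
print(relative_angles(0.0))
print(relative_angles(90.0))
```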

Animated sound events: not exactly animated in the traditional sense, but we have a system for moving sounds around in the world given some set of parameters. This gets used subtly in ambient cases to give motion to things like insects or birds or non-diegetic sounds, but also less subtly for things like explosion debris.

Global Stack and Global Opvars: the global stack is a sort of singleton script that runs constantly; it mostly gets used to look at arrays of global values (global opvars) and set other global values based on them. Most of our ambient sounds look at the resulting values to determine if they should play and how loudly. A simple example is indoors vs. outdoors. We have a value that is “how outdoors is the player?” If they’re standing on the balcony at the beginning of the game that’s 100% outdoors, and if they’re in a subterranean tunnel system that’s 0% outdoors, but if they’re standing inside next to an open door that leads outside that’s maybe 60% outdoors. If they close the door maybe it’s now 25%. The “outdoor” sounds watch this value and respond accordingly, so they’re full volume and unfiltered at 100% but silent and very low-passed at 0%, and somewhere in-between for any other value.
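The "how outdoors is the player?" example maps naturally onto a small function. The sketch below is an assumption-laden illustration: the percentages are the ones Dave gives, but the linear volume mapping, the cutoff range, and the names `outdoor_layer_params` and `EXAMPLE_STATES` are hypothetical.

```python
def outdoor_layer_params(outdoors, min_cutoff_hz=300.0, max_cutoff_hz=20000.0):
    """Turn the global 'outdoors' value (0.0 to 1.0) into volume and filter settings.

    The linear volume curve and the cutoff range are assumed for illustration.
    """
    o = min(max(outdoors, 0.0), 1.0)
    volume = o
    cutoff_hz = min_cutoff_hz * (max_cutoff_hz / min_cutoff_hz) ** o
    return volume, cutoff_hz


# The example situations from the interview, as values a global stack might set.
EXAMPLE_STATES = {
    "balcony": 1.0,
    "inside, door open": 0.6,
    "inside, door closed": 0.25,
    "subterranean tunnel": 0.0,
}

for place, outdoors in EXAMPLE_STATES.items():
    volume, cutoff_hz = outdoor_layer_params(outdoors)
    print(f"{place:>20}: volume={volume:.2f}, cutoff={cutoff_hz:.0f} Hz")
```

Every "outdoor" ambient layer reads the same value, so one global update is enough to fade and filter all of them together.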

RS: I would like to simply add that our tools are incredible, and make collaboration with programmers very straightforward.

What were some of your challenges in mixing this game?

RS: From a sound effects point of view, a lot of the game mixes itself because of our playtesting and feedback gathering routine. This results in a lot of data and useful opinion points we can act on. Typical playtesting will cover small parts of the game, resulting in narrow but deep mixes within a restricted group of sounds, for example mixing what fighting a group of Antlions sounds like, so we also have longer playthroughs to identify wider mix considerations for which we could use broad-stroke solutions. With such a small audio team, this is also helpful in identifying areas that may need some design attention.

MM: Our audio teams tend to function much like Valve itself, very organically non-hierarchical with each artist managing their own contributions and how those contributions behave on the multiple axes of spatialization, levels, reverbs and EQ. That, combined with the sense of scale that VR requires, presented a particular challenge that meant continually (re)designing and adjusting the signal flow so that as each artist added their elements they could feel relatively confident that they were setting their levels as appropriately as possible.

We also surveyed and re-surveyed what output levels people had their system set to so we could narrow in on and all work at an ideal volume. The strider experience that happens in the first minutes of the game served as both a test for how large of a dynamic range we could achieve to help convey scale, as well as establishing an early benchmark for everyone to work to.

In terms of sound, what are you most proud of on Half-Life: Alyx?

DF: My answer is pretty nerdy, but here it is: at our old building we had this stairwell that connected a bunch of the floors and it ran right by the cafeteria space. At lunchtime, I used to start on that floor and walk up the stairs to my office, several floors up, and I used to love how the din of the lunchroom sort of faded down and got low-passed and by the time I got all the way upstairs I was just in this quiet space. We sort of have the same thing in the current space but it’s not as good. For this game, I really wanted to recreate that feeling in the ambience of leaving one area and slowly entering a new one, and there are a few places where I think it works really well. The door after the first outdoor combat in the Processing Plant map (don’t know what the public name of this map is) is one of my favorite examples. If you leave the door open and walk down that hallway towards the zombie who blows himself up with trip mines, you get this really nice transition from outdoors to indoors. See? I told you it was nerdy.

RS: The thing I’m personally happiest with is fairly nerdy too — C++ I wrote shipped in a game!

Sound-wise, I was watching a stream recently and when a Combine Dropship passed close overhead, the player gasped and the comments went wild about the sound, and they weren’t even experiencing it in VR with the proper panning and height.

Excitement and engagement like that are incredibly rewarding to see and I think for all of us that is more important than getting a cool sound effect idea in the game.

In general, we hit our audio goals and have continued a solid roll of creating useful tech and content to build from going forward. The Half-Life: Alyx team as a whole should be proud; everyone contributed to the final sound.

MM: I’d say the thing I’m most impressed with is the overall artistic cohesion, technicality, power and dynamics we were able to achieve with a group of freely contributing artists. It’s a very different sort of dance than that of a more traditional hierarchically organized team.

KT: I want to second what Mike said about artistic cohesion. I immediately thought of that when I read this question. We had five sound designers on this game but it sounds like one individual did all the audio. I don’t think that’s an easy feat to pull off. In fact, multiple times I thought one designer had done a piece of work only to find out it was another person. I’ve played many games where you can tell via style and worse, quality, that different people worked on different aspects of the game. That’s not a good thing. It ruins sonic cohesion in the product. I was amazed at how the game simply sounded great and flowed very naturally. Not once did something sound out of place in terms of quality and feel. It really was a great team full of extremely helpful people.

A big thanks to Roland Shaw, Dave Feise, Kelly Thornton, and Mike Morasky for giving us a behind-the-scenes look at the sound of Half-Life: Alyx and to Jennifer Walden for the interview!