As a sound designer in the AAA industry, I often come across a very common issue: repetition and certain frequencies will ruin the experience for me and others. We might not know it, but subconsciously over time we get fed up with repetition, monotony and frequencies that create dissonance in the overall soundscape.


I noticed that though we try to create great experiences and moments in our games, we neglect to think about which sounds will be heard the most. It’s not the bigger moments or that special gun you fire every two hours that need to sound good. No – sounds such as footsteps, doors opening and closing, common interactions and the like are heard much more often (depending on the game of course). And we all know there is nothing that’s more of a nuisance than recognizing a sound in one game that has been used in another, or as part of a motion picture soundscape. The same thing happens if a sound is used over and over again in the same game. We create listening fatigue through repetition, and we generate boredom from monotony. We need to define the sweet spot between the boredom of monotony and the safety of recognition.

It is of course very much an individual thing, and just because something is repetitive, it doesn’t mean it’s wrong or bad and should be changed. It is an individual decision that needs to be considered per project, per sound. This is why we created a “nuisance score system” to track down all bothersome sounds in our game and deal with them individually – straight up, it’s a way to label sounds that are irritating with a pre-made template that defines what is annoying.

And a short note: these are all just thoughts and opinions of mine to give you ideas of your own. This is not a “must follow and obey” piece of writing.

The theory of the nuisance score


The nuisance score was a system to create labels for sounds that sonically ruined the experience of our product, even though the specific sounds in question would sound fine on their own. It helped us find the sounds that were breaking the immersion rather than creating it, and we found that creating an immersive soundscape was much easier than preventing the immersion from breaking from time to time – as even the slightest nuisance counts subconsciously towards a greater combined nuisance score, which eventually breaks our immersion and shortens the time we are willing to spend on the game.

Giving a nuisance score

This was a system we used when playing the game. We took notes without thinking of aesthetics or other things; any sound could be given a nuisance score, just because. We played various levels, making notes of which sounds were heard the most, which sounds should or should not stand out, and which frequently played sounds had odd samples that would break the natural pattern. After each session we discussed which sounds had scored higher than the threshold we had determined.
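
As a rough illustration of that note-taking loop, here is a minimal sketch of how session notes could be tallied against a threshold. The article does not describe the actual tooling or scoring scale, so the sound names, the point values and the function names below are all assumptions made up for the example.

```python
from collections import Counter


def tally_nuisance(notes):
    """Sum the nuisance points noted per sound during a play session.

    `notes` is a list of (sound_name, points) pairs, one per note taken.
    """
    scores = Counter()
    for sound, points in notes:
        scores[sound] += points
    return scores


def over_threshold(scores, threshold):
    """Return the sounds whose combined score exceeds the threshold, worst first."""
    flagged = [(sound, points) for sound, points in scores.items() if points > threshold]
    return sorted(flagged, key=lambda item: (-item[1], item[0]))


# Hypothetical notes from one session: every irritation is one point.
session_notes = [
    ("door_open", 1), ("footstep_concrete", 1), ("door_open", 1),
    ("door_open", 1), ("ui_confirm", 1), ("footstep_concrete", 1),
]
flagged_sounds = over_threshold(tally_nuisance(session_notes), threshold=1)
print(flagged_sounds)
```

The flagged list is only a starting point for the discussion after the session; whether a flagged sound actually needs fixing is still a per-sound decision.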

This nuisance score helped us create a whole new list of “bugs” for us to fix, bugs listed as “New door system, to avoid repetition”, “Greater variety of footsteps to lower nuisance score” and so on. For example, doors opening and closing had just one sound for each door. This meant that if our protagonist was in a quiet place and opening several doors in a row, we would have a pattern of identical sounds playing right after each other.

We examined the cocktail party effect and other psychoacoustic phenomena, and how to avoid them, to decide how to fix the doors. Sounds that play too often, or longer soundscapes that our minds decipher as irrelevant or as carrying no information, are filtered out of our focus, or they simply start to bother us whenever they play. A door that becomes annoying when played too often, or a sound that we begin to completely ignore, is just bad for business. We didn’t want to make our doors sound any different; we were only trying to achieve a better-sounding end product with less repetition.


You may think that a sound that is ignored in our perceived soundscape doesn’t do anything, but think about how much you notice the sound of silence whenever your fridge turns off because you had been completely ignoring the fact that it was noisy just a few seconds ago. As much as this is an interesting phenomenon, it is also unwanted – we would much rather have full control of our players’ focus than lose control over the soundscape they perceive. If our players experience an “oh, that sound just disappeared” that’s +1 for the nuisance score.


Solving and lowering a too-high nuisance score:

By layering and randomization

Any sound can achieve a nuisance score, and again it should be considered per sound. The solution to any nuisance score is just as personal. Some sounds need repetition, to provide that safety of recognition as mentioned earlier, instead of being randomized just for the sake of randomization. Randomizing such a sound can create more of a nuisance than it removes (e.g. the coin in Super Mario, the exclamation mark in Metal Gear, etc. would all be more of a nuisance if they changed constantly rather than stayed as monotonous as they are).

In the above case with the doors, we decided to create a system that would go hand in hand with our prefab/template system, which allowed any generic door to be used over and over again by level designers, but still give us control over the sounds with automatic randomization. This was solved by splitting the sound into layers, and yes, this used more voices, but we agreed that a sound that plays for a quarter or half a second can definitely use two or three more voices – and if this was the deciding factor in our voice budget then the problem would most likely lie elsewhere.


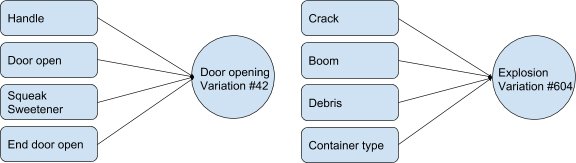

We split these sounds into the components of the door handle, the swing of the door, an optional swing sweetener and the end of the opening sound. During the creation process the layers were designed and auditioned as one mixed sound, but in the game each layer was triggered separately and mixed together in real time. So with five variations of each of the three core layers we were able to achieve 125 variations (5 × 5 × 5), or 625 variations if the optional sweetener was used as a fourth layer. This was more than enough that players would never notice when the same variation played again. Creating variations like this proved very effective, yet we also discovered that the more variations we added, the more sensitive the system became to odd samples. The more parts and variations, the greater the risk became of noticeable patterns in the individual layers – so keep that in mind if you experiment with this yourself.
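
The arithmetic and the per-layer randomization can be sketched in a few lines. This is only an illustration under assumptions: the asset names and the `pick_door_sound` helper are hypothetical, and a real project would do the picking inside its audio middleware rather than in Python.

```python
import random

# Hypothetical asset names: five variations per layer, as in the example above.
LAYERS = {
    "handle": [f"door_handle_{i:02d}" for i in range(1, 6)],
    "swing": [f"door_swing_{i:02d}" for i in range(1, 6)],
    "end": [f"door_end_{i:02d}" for i in range(1, 6)],
}
SWEETENERS = [f"door_sweetener_{i:02d}" for i in range(1, 6)]


def count_combinations(layers, sweeteners=None):
    """Number of distinct results when one variation is picked per layer."""
    total = 1
    for pool in layers.values():
        total *= len(pool)
    if sweeteners:
        total *= len(sweeteners)
    return total


def pick_door_sound(rng, use_sweetener=False):
    """Pick one variation per layer; the game would mix the picks at runtime."""
    picks = {name: rng.choice(pool) for name, pool in LAYERS.items()}
    if use_sweetener:
        picks["sweetener"] = rng.choice(SWEETENERS)
    return picks


print(count_combinations(LAYERS))              # 125
print(count_combinations(LAYERS, SWEETENERS))  # 625
print(pick_door_sound(random.Random(), use_sweetener=True))
```

Note that the count only tells you how many combinations exist, not how different they sound. One odd sample in a single layer shows up in a fifth of all door openings here.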

A bonus for this door system was that it fit extremely well into our prefab/template system. Level designers could use a lot of generic doors on their levels, and artists could add different shaders and effects to create specific graphical atmospheres, yet the assets could be the same in another level with a completely different atmosphere. So the possibility to alter each layer on each instance of a generic door proved very useful. A generic door used in a brightly lit, well-polished and clean surrounding could sound one way, but the exact same door in an old house with dirty surroundings could have a squeaky-sounding sweetener added to it or perhaps something else – and this system made it extremely flexible to alter any part of the sound for any door to fit our levels.

The same system was used for explosives. Previously, we had just three variations of explosions: small, medium and large. This received a very high nuisance score, since it was possible to throw two identical explosives right after each other, and two identical sounds in a row quickly stood out as monotonous. This was solved by layering all explosive sounds – small, medium and large still – but with some tools to figure out the size and material of the explosion, and what materials were around it. This created explosions that could consist of a small fire explosion sound with a different medium-sized container sound – from wood to metal to cardboard and so on – all put together with a random selection of five booms to create some kind of consistency.

Specific debris layers, chosen by what was in the surroundings, each had their own attenuation and behavioral control depending on where the explosion occurred relative to the player. Strict voice control decided which layers could overlap: the container sounds could, but the generic boom could not. This easily generated hundreds of variations of explosions, not only solving the problem of explosions that happened right after each other but also giving each playthrough a bit of variety in case you blew the same thing up each time.

By frequency control


A rough example would be to have background music playing in Am (A minor) but all UI sounds created with an A# or Bb base note, which according to music theory would create dissonance. There is NO Bb in A minor. That would be the same as having an organ play a full Am chord and then spamming the A# piano key on top of it. Unless that is part of your aesthetic plan, that will sound really annoying and probably bad too, but I am not to judge if you think it sounds great. Dissonance is VERY personally and culturally determined. Regardless, if your music is written to Western music theory and plays a specific chord, any sound that doesn’t fit into that musical theory will create some kind of dissonance through its pitch. It may or may not create a nuisance, but that is why we played our game and marked all the items and situations that generated a nuisance score.
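
To make the Am versus A#/Bb example concrete, here is a small sketch that checks whether a note belongs to a natural minor scale. It only covers the natural minor scale in the twelve-tone Western system, and the function names are my own for this illustration.

```python
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]
FLAT_TO_SHARP = {"Db": "C#", "Eb": "D#", "Gb": "F#", "Ab": "G#", "Bb": "A#"}
# Semitone steps from the root for a natural minor scale.
NATURAL_MINOR_STEPS = [0, 2, 3, 5, 7, 8, 10]


def pitch_class(note):
    """Map a note name (sharp or flat spelling) to a number from 0 to 11."""
    return NOTE_NAMES.index(FLAT_TO_SHARP.get(note, note))


def natural_minor_scale(root):
    """Return the seven note names of the natural minor scale on `root`."""
    start = pitch_class(root)
    return [NOTE_NAMES[(start + step) % 12] for step in NATURAL_MINOR_STEPS]


def in_key(note, root):
    """True if `note` belongs to the natural minor scale on `root`."""
    return NOTE_NAMES[pitch_class(note)] in natural_minor_scale(root)


print(natural_minor_scale("A"))  # ['A', 'B', 'C', 'D', 'E', 'F', 'G']
print(in_key("Bb", "A"))         # False
print(in_key("E", "A"))          # True
```

A note being outside the scale is not proof of a nuisance, and a note inside the scale can still clash with the chord playing at that moment, so treat a check like this as a hint for where to listen.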

Usually when I hear the use of Western music theory, I think of the “ordinary” Western system with twelve tempered notes per octave, the tempered keyboard we associate with Bach. It doesn’t have to be this system that you use, but it’s the one we hear the most often, so let’s stick to that for explanatory reasons. In practice you can use any system as long as you stick to it and don’t create what may be defined as dissonance in it.

Either the UI sounds or the music can be changed to fit the other, but the main problem is with ambient sounds and drones that have some kind of pitch that isn’t in tune with the music. This can be solved by pitch shifting either one to fit the other, but depending on how the overtones in the ambient sound are distributed, it may not be possible to actually get them in tune. More often than not it is easier, faster and gives a better result to create a new asset from scratch. It can also be solved with a narrow peaking (bell) filter on the ambient sound: boost a base frequency, and if needed its correct overtones, by just a few dB and you will have shifted the perceived pitch of the sound so it works with the key you want. The opposite move, a notch filter, can take away specific frequencies if your sound has all the right frequencies and is ruined only because there is one too many.
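
For readers who want to see what such a narrow boost or cut looks like numerically, here is a sketch of a peaking filter using the widely published Audio EQ Cookbook (Robert Bristow-Johnson) biquad formulas. This is not the tool we used on the project; the sample rate, center frequency, gain and Q values are example assumptions, and in practice you would simply reach for the EQ in your DAW or middleware.

```python
import cmath
import math


def peaking_eq_coefficients(sample_rate, center_hz, gain_db, q):
    """Biquad coefficients for a peaking filter (Audio EQ Cookbook form).

    Positive gain_db boosts around center_hz, negative gain_db cuts.
    Returns (b, a) normalized so that a[0] == 1.
    """
    amplitude = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * center_hz / sample_rate
    alpha = math.sin(w0) / (2 * q)
    b = [1 + alpha * amplitude, -2 * math.cos(w0), 1 - alpha * amplitude]
    a = [1 + alpha / amplitude, -2 * math.cos(w0), 1 - alpha / amplitude]
    return [x / a[0] for x in b], [x / a[0] for x in a]


def gain_db_at(b, a, sample_rate, freq_hz):
    """Gain of the filter in dB at a single frequency."""
    z = cmath.exp(-1j * 2 * math.pi * freq_hz / sample_rate)
    response = (b[0] + b[1] * z + b[2] * z * z) / (a[0] + a[1] * z + a[2] * z * z)
    return 20 * math.log10(abs(response))


# Example: lift A3 (220 Hz) by 3 dB with a narrow bell at 48 kHz.
b, a = peaking_eq_coefficients(48000, 220.0, 3.0, q=8.0)
print(round(gain_db_at(b, a, 48000, 220.0), 2))
print(round(gain_db_at(b, a, 48000, 5000.0), 4))
```

A high Q keeps the change local to the pitch you care about, which is why a few dB can be enough without recoloring the rest of the ambience.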


Music in Am and a single hit of an A# UI sound might not do much, but if we are talking a whole score in Am and all room tones are in A# then you will have a dissonant experience while playing the game which will generate a nuisance score. We experimented a bit with this, not a full scientific experiment, but I had some friends play a small demo level with basic UI elements. It wasn’t anything fancy, but the result did make me think that this was needed (so if you want to go nuts with this as a research project, be my guest and go nuts). We had 19 players, 10 had a small level where the music and ambient sound were out of tune, and 9 played one in which they were in tune. They played until they felt like quitting and then said why they quit. The level was pretty bad so that didn’t exactly help, but all the players who played the out of tune version quit the game a minute or two before the in tune players.

A good start is also knowing which frequencies are associated with which notes and to do some spectral listening training to get to know which frequencies sound like what. A good exercise is to associate specific frequencies with words or images, like if there is too much 8kHz in something and it reminds you of a leaking gas pipe then you are just about right. Eventually you can train your ear to discern within a Hz of where some tones actually are. Your Am music score (with A, C and E notes played beautifully) and your room tone in A as well (the harmony here is just magical) don’t work well with sounds that have obvious and audible spikes at 123.47 Hz (a B) if they are in any way perceived as pitches.

Here is a chart with octaves, notes and frequencies for your convenience – all in Hz. So if you are ever in doubt about which peak to remove or add – check the chart.

| Note | Octave 0 | Octave 1 | Octave 2 | Octave 3 | Octave 4 | Octave 5 | Octave 6 |
|---|---|---|---|---|---|---|---|
| C | 16.35 | 32.7 | 65.41 | 130.81 | 261.63 | 523.25 | 1046.5 |
| C# | 17.32 | 34.65 | 69.3 | 138.59 | 277.18 | 554.37 | 1108.73 |
| D | 18.35 | 36.71 | 73.42 | 146.83 | 293.66 | 587.33 | 1174.66 |
| D# | 19.45 | 38.89 | 77.78 | 155.56 | 311.13 | 622.25 | 1244.51 |
| E | 20.60 | 41.2 | 82.41 | 164.81 | 329.63 | 659.26 | 1318.51 |
| F | 21.83 | 43.65 | 87.31 | 174.61 | 349.23 | 698.46 | 1396.91 |
| F# | 23.12 | 46.25 | 92.5 | 185 | 369.99 | 739.99 | 1479.98 |
| G | 24.50 | 49 | 98 | 196 | 392 | 783.99 | 1567.98 |
| G# | 25.96 | 51.91 | 103.83 | 207.65 | 415.3 | 830.61 | 1661.22 |
| A | 27.5 | 55 | 110 | 220 | 440 | 880 | 1760 |
| A# | 29.14 | 58.27 | 116.54 | 233.08 | 466.16 | 932.33 | 1864.66 |
| B | 30.87 | 61.74 | 123.47 | 246.94 | 493.88 | 987.77 | 1975.53 |
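
The values in the chart follow twelve-tone equal temperament with A in octave 4 at 440 Hz, so they can also be computed instead of looked up. The sketch below does that, and goes the other way to name the nearest note for a peak you find in a spectrum analyzer. The function names are mine and only there for illustration.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]
A4_HZ = 440.0  # reference pitch used by the chart


def note_frequency(name, octave):
    """Equal-tempered frequency in Hz, e.g. note_frequency('A', 4) is 440.0."""
    semitones_from_a4 = NOTE_NAMES.index(name) - 9 + 12 * (octave - 4)
    return A4_HZ * 2 ** (semitones_from_a4 / 12)


def nearest_note(freq_hz):
    """Return (name, octave, cents_off) for the note closest to freq_hz."""
    semitones_from_a4 = round(12 * math.log2(freq_hz / A4_HZ))
    semitones_from_c0 = semitones_from_a4 + 9 + 4 * 12
    name = NOTE_NAMES[semitones_from_c0 % 12]
    octave = semitones_from_c0 // 12
    cents_off = 1200 * math.log2(freq_hz / note_frequency(name, octave))
    return name, octave, cents_off


print(round(note_frequency("C", 4), 2))  # 261.63
name, octave, cents = nearest_note(123.47)
print(name, octave, round(cents, 1))     # B 2, almost exactly in tune
```

The cents value tells you how far a peak sits from the nearest note, which helps when deciding whether a drone is simply out of tune or actually sitting on a note that clashes with your key.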

Every sound is different in every situation and mix, so this is not a rule that you should follow blindly, but it’s a good start if you are having trouble figuring out why your mix has issues in certain places – why it is such a nuisance!

By removing odd samples


The more we add randomization, or hundreds of assets, or even generate sounds for our steps, the more sensitive our system becomes to having one combination which may create a nuisance in the pattern. If a step stands out or is in any way distinguishable from the others, then the player and the psychoacoustic processes in his or her brain will automatically start to take note of this pattern. Not only will this be physically annoying and probably irritate them enough to feel slightly tortured, it will also create a very high nuisance score – and this torture is what the nuisance score is trying to help you avoid.

Odd samples do not only occur in footsteps. Any sound that plays in a pattern – footsteps being a great example of such – can have this issue. When recording material for a game, we often handle this very differently than when creating sounds for linear media, such as motion pictures. We do not have a single frame where we can decide that here shall the perfect footstep play. Instead, we need to create systems that allow our players to move around freely.

So when recording Foley for the non-linear parts of our game – footsteps are a very good example here – we may need ten steps that are all variations but all very similar. This is different from recording that one perfect step for one frame. The same thing goes for any other kind of sound that needs to be played over and over again. With sword fighting, we don’t need one version of a good swing with a sword, we need ten variations that are almost indistinguishable from one another, since the same sound that’s constantly spammed into our ears would increase the nuisance score.
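
Having ten near-identical variations only helps if the playback logic doesn’t hand the player the same one twice in a row. A common way to handle that is a “shuffle bag”, sketched below. This is a generic technique rather than a description of our system, the class and sample names are hypothetical, and it assumes every variation name is unique.

```python
import random


class ShuffleBag:
    """Plays every variation once per cycle in random order,
    and never plays the same variation twice in a row."""

    def __init__(self, items, rng=None):
        if len(items) < 2:
            raise ValueError("need at least two variations")
        self._items = list(items)
        self._rng = rng or random.Random()
        self._bag = []
        self._last = None

    def next(self):
        if not self._bag:
            # Refill and reshuffle once every variation has been played.
            self._bag = self._items[:]
            self._rng.shuffle(self._bag)
            # pop() takes from the end, so make sure the end of the new
            # bag is not the variation that was played most recently.
            if self._bag[-1] == self._last:
                self._bag[0], self._bag[-1] = self._bag[-1], self._bag[0]
        self._last = self._bag.pop()
        return self._last


footsteps = ShuffleBag([f"footstep_{i:02d}" for i in range(1, 11)])
print([footsteps.next() for _ in range(12)])
```

Most audio middleware offers an equivalent “avoid repeating the last N” setting, so in practice this is usually a checkbox rather than code. The point is that the selection rule matters as much as the number of variations.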

An odd sample can also be a good-sounding sample. It can be any kind of sound, even a well-produced one, that just doesn’t fit into the soundscape. Or it can be a sound that is oddly placed or stands out because of its origin or production style, which is a problem if you use different recording setups for the same type of sound – like recording footsteps with one type of microphone and later adding some footsteps that you recorded with a different microphone or in a different room.


The same goes for dialogue if the recording equipment leaves a sonic “watermark” which you cannot hear until a line recorded with different equipment is suddenly used.

It can also be a sample that stands out – even if it may be fun to put in as a joke, it doesn’t always have the desired effect. A perfectly-timed soundscape with immersion that sends you to a different planet and a new place in space and time can easily be ruined if the Wilhelm Scream is suddenly heard in the soundscape. It’s like the immersion timeline takes a detour and we are ripped out of the fantasy.

First, it’s a very easily recognized sound, but it also doesn’t really belong in there with all your other sounds that may be produced completely differently. As fun as that sound may be to use, think of the actual effect of doing so. As always, it is your own free aesthetic choice to use it, and if you do I am not pointing fingers at your game or your creative freedom. But seriously, think it over, and think of the golden rule: just because a sound is recognized and has been heard before by your player, the link between that sound and something else might not benefit your soundscape or the immersion you might be trying to achieve. Recognition does not always equal the safety of recognition as mentioned earlier.

Game object orientation control


Another issue that can easily create a nuisance score is if the sounds coming from the game world seem detached from the actual objects they represent. For example, say there is an ambient background playing as a 2D background loop, which contains birds, dogs and people talking. If your scene does not contain specifically that combination of things in the graphics, or at least has the illusion of the existence of these things, then we break the immersion of the moment.

The same thing goes if a sound is originating from a game object placed somewhere, but the origin of the sound is placed just off that place and perhaps sounds like it’s coming from slightly behind or in front of the object. This also applies if a 3D sound is located where it’s possible to run very closely around its physical location, making it pan extremely hard – this also breaks immersion. It basically follows many of the rules and observations made by Jean-François Augoyard and Henry Torgue in Sonic Experience: A Guide to Everyday Sounds (read it – it’s great; the English edition has a foreword by R. Murray Schafer) about how a sudden change in environment or too fast a decrescendo of any sound will make it stand out and break the soundscape even after it’s gone.

A room tone which is just a static noise may not experience this sort of immersion-breaking effect as much as an ambient sound with all sorts of patterns and combination of sounds in it. Break the loop into smaller pieces and randomize them so you do not play the same loop over and over again. This will remove the issue mentioned above and the issue of creating a recognizable loop because of its pattern.

Another thing is to simply make sure your ambient sounds fit more specifically with what you want to represent in the level. A dog barking in the background every 20 seconds because that’s the length of your loop is a very clear pattern and also an issue if there are no dogs to be seen and the dog in the loop is far too audible. Building an ambience out of several elements and/or lots of 3D sounds instead of 2D sounds can solve quite a few of these issues and remove the patterns mentioned above and lower the nuisance score generated by all these issues.
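
One way to picture the difference between a fixed loop and an ambience built from elements is to look at when each one-shot would play. The sketch below schedules one-shots with randomized gaps and avoids playing the same element twice in a row. The element names, gap values and function name are assumptions for the example, and a real game would let the audio engine do this at runtime.

```python
import random


def schedule_one_shots(elements, duration_s, min_gap_s, max_gap_s, rng=None):
    """Return (time_in_seconds, element) events with randomized spacing.

    No element is picked twice in a row, so a single bark or bird call
    cannot form an obvious fixed-interval pattern.
    """
    rng = rng or random.Random()
    events = []
    time_s = rng.uniform(min_gap_s, max_gap_s)
    previous = None
    while time_s < duration_s:
        choices = [e for e in elements if e != previous] or list(elements)
        previous = rng.choice(choices)
        events.append((time_s, previous))
        time_s += rng.uniform(min_gap_s, max_gap_s)
    return events


# Hypothetical one-shots placed around the level instead of baked into a loop.
ambience = ["dog_bark_far", "bird_call_a", "bird_call_b", "crowd_murmur"]
for time_s, element in schedule_one_shots(ambience, 120.0, 6.0, 25.0):
    print(f"{time_s:6.1f}s  {element}")
```

Combined with 3D placement near something the player can actually see, this removes both the 20-second pattern and the mismatch between what is heard and what is shown.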

Find your niche, and stay there – an immersion comfort zone:

Create an immersion comfort zone

If your game is meant to sound realistic, even if it is everything but realistic, you have still created an immersion comfort zone. Before starting a project, try to define your immersion comfort zone and stay inside it – going outside it will break immersion and create a nuisance score that needs to be resolved. It can be difficult to define one, but if you stay inside the zone (the aesthetic fantasy of your game) then you are one step closer to not having odd samples.

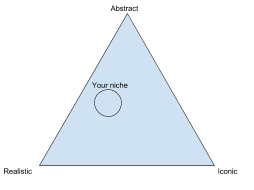

I always explain this as a triangle, which has corners marked as realistic, abstract and iconic. Most games will never really be fully realistic but it’s up to you to define where your aesthetics are and how big a zone you want to have. There isn’t a rule and I have never worked by a rule of how big the zone should be – it’s simply a zone that I, or we in an audio department, have decided.


Let’s define a zone for a fictional game that we want to sit somewhere in between realistic and abstract, where none of our sounds are very iconic or cartoony for any character – no specific leitmotifs for characters or objects. This gives us an immersion comfort zone, meaning that if we suddenly put in a sound which is totally iconic and stands out from everything else, we have created a nuisance. A cartoon spring going boing because a character jumps in our realistic-looking game may be a fun gimmick, but in several projects I have noticed this does more harm than good to the overall experience of the game.

Consider it like creating your own little acoustic ecology or audio world, just like the real world, where things in it sound a specific way. Don’t jump off that path or break the ecology, your own little acoustic niche. This goes well with the randomization solution mentioned earlier, because as long as your explosion, door or footstep, which has all these randomizations added to it, is the same kind of sound in the end, then you have succeeded. In short, splitting a sound into layers, randomizing them and so on works only if the end result is the same but different. Hundreds of variations of layers of an explosion only work if, when all these are put together, it still sounds like an explosion – and not just any explosion, but an explosion that fits into the immersion comfort zone and acoustic world of your current project.

As long as the end product is perceived as the same type of sound – you have created a really powerful tool to rid your game of repetition and potential sounds that can create a hidden nuisance.

Real world immersion breaking experience and a max nuisance score

Seen through a game audio lens, this story shows how wrongly placed emitters and unnatural behavior in a soundscape can do the opposite of making us feel connected. Sound that is used correctly everywhere except in one place can create a disconnect and become a nuisance, creating a high nuisance score on its own and certainly when summed together with everything else in the final product.

So what is the story? Here goes:

I went with my wife to see an acrobatic circus-like show in Copenhagen, Denmark. We were seated quite well, maybe at an angle of 10 degrees from the center of the stage. The only speakers in the setup were placed at the far back of the stage in a stereo setup. The speakers were very widely spaced and quite far away. This meant that absolutely no direct sound was able to reach us, so instead of experiencing the live musicians and singers on stage as sound coming directly from them, the sound came from the speakers at the back of the stage – making the audience at our seats feel totally disconnected from the actual show.

Another problem was that the speakers were placed too wide and poorly so we could only hear the speaker on our side of the stage, meaning that we were having a mono experience as well. Everything we looked at was on the stage, but all we could hear was sound coming from a completely different direction.

As a sound designer I kind of quickly noticed and took note of why I was disconnected from the show and how it bothered me, but during the intermission, my wife told me that she felt disconnected too. I tried to quickly explain it, and since I made it into “because of psychoacoustics, you…” I was cut off.

I noticed other people in the audience standing up and saying similar things, such as feeling disconnected. They couldn’t really feel the performers and so on, and they, as well as my wife, didn’t know why. This made me realize that if the stage had been set up better or delayed monitors had been placed closer to us, this would have given us a much more immersive experience. When the show was fully over, the guy sitting next to me said, “Meh. 3 out of 5. I just couldn’t get into it.”

Think about that – with better speaker placement, without breaking the immersion like that and without the massive nuisance score this setup created, they might have gotten a 4 out of 5 from this guy. That’s a pretty big change in a review score, just because of bad speaker placement or a poor setup of other sound-related things, or simply because the setup was done by a person who didn’t know how psychoacoustics and directional perception work.

If making sure your game sounds good and doesn’t break the immersion is one of the differences between a 3 and a 4 out of 5 stars, that is, scaled linearly, the difference between a 60 and an 80 on Metacritic. That should be a rock-solid argument to anyone in production who doesn’t understand audio or believe in audio being such an important factor. If the discussion of sound design and its importance in games isn’t enough to convince a producer or director, they certainly should understand things like: “from a 3 to a 4-star review”, “higher Metacritic score”, “players who stay longer” and “a better end product”.

“(Dis-)Harmony in movement: effects of musical dissonance on movement timing and form” by Naeem Komeilipoor, Matthew W. M. Rodger, Cathy M. Craig, and Paola Cesari

“Consonance and Dissonance” by Sibelius Academy

“Why dissonant music strikes the wrong chord in the brain” by Philip Ball

“Bioacoustics Theories” by Almo Farina

“Invasion of the acoustic niche: variable responses by native species to invasive American bullfrog calls” by Camila Ineu Medeiros, Camila Both, Taran Grant, and Sandra Maria Hartz

“Biological invasions and the acoustic niche: the effect of bullfrog calls on the acoustic signals of white-banded tree frogs” by Camila Both and Taran Grant

“Scientists are recording the sound of the whole planet” by Josh Dzieza

“Acoustic ecology” by Wikipedia

Thanks for reading and I hope you enjoyed it.

For more material consider reading my MSc thesis about informant audio in video games – you can download it here. It’s also a bit of a personal opinion on how to link sound design to game design, and vice versa, to increase communication between game and player through the use of sound.

I also write and blog and post stuff on my Patreon – you don’t need to pay anything, it’s just an easy place to follow everything I post, so check it out if you like. To learn more about me, you can also visit my website. That’s it for my personal experience and opinions today – I hope you enjoyed reading this blog.

By Bjørn Jacobsen, MSc. IT. Audio Design, Aarhus University, Ba. Electronic Music Composition, Royal Academy of Music, Danish Institute of Electroacoustic music (DIEM)

A big thanks to Bjørn Jacobsen for sharing his insight into how to maintain immersion – and reduce irritation – in your sound design!